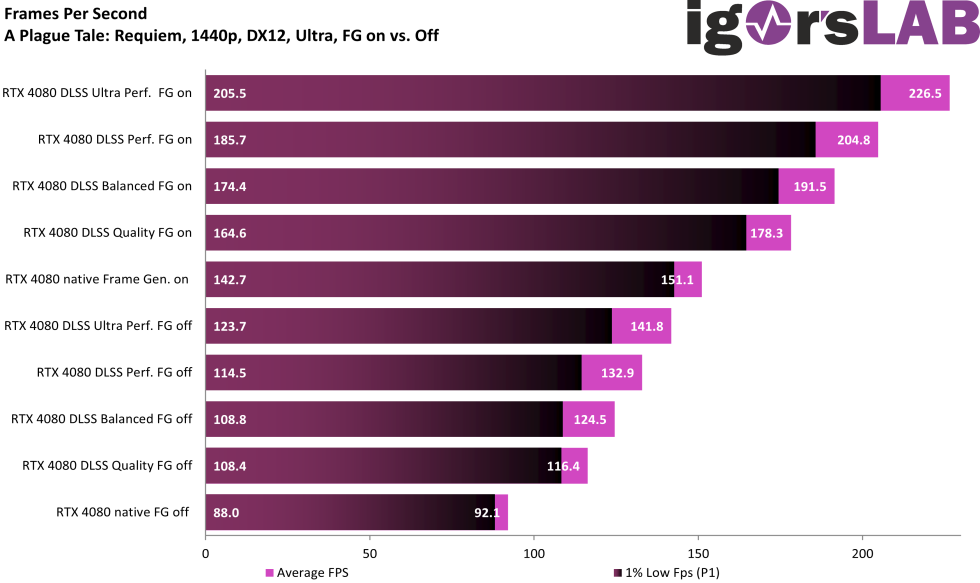

Average FPS

The game really surprised me because, so far, even in 1440p there is no CPU bottleneck to be found. We actually have to test in 1080p. DLSS 3.0 FG increases performance by a factor of 2.4. Not bad.
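For readers who want to check a factor like this against their own logs, here is a minimal sketch of the arithmetic. It assumes you have per-frame render times in milliseconds for two runs. The function names and the frame times are made up for illustration and are not measurements from this review.

```python
def average_fps(frame_times_ms):
    """Average FPS = number of frames / total elapsed time in seconds."""
    total_seconds = sum(frame_times_ms) / 1000.0
    return len(frame_times_ms) / total_seconds


def speedup(fps_with, fps_without):
    """How many times faster the first run is compared to the second."""
    return fps_with / fps_without


# Hypothetical frame times in milliseconds, NOT data from this article.
baseline_ms = [20.0, 20.0, 20.0, 20.0]      # run without Frame Generation
frame_gen_ms = [8.0, 8.0, 8.0, 8.0, 8.0]    # run with Frame Generation

baseline_fps = average_fps(baseline_ms)
frame_gen_fps = average_fps(frame_gen_ms)
factor = speedup(frame_gen_fps, baseline_fps)

print(f"{baseline_fps:.1f} FPS vs. {frame_gen_fps:.1f} FPS, factor {factor:.2f}")
```

Averaging over total time rather than averaging per-frame FPS values keeps a few very short frames from inflating the result.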

Frame Times, Variances and Percentiles

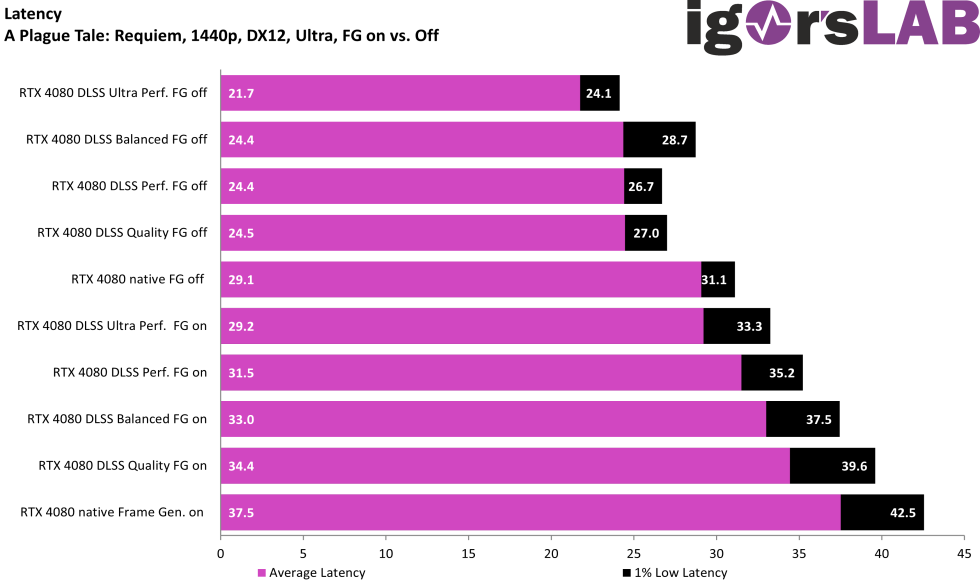

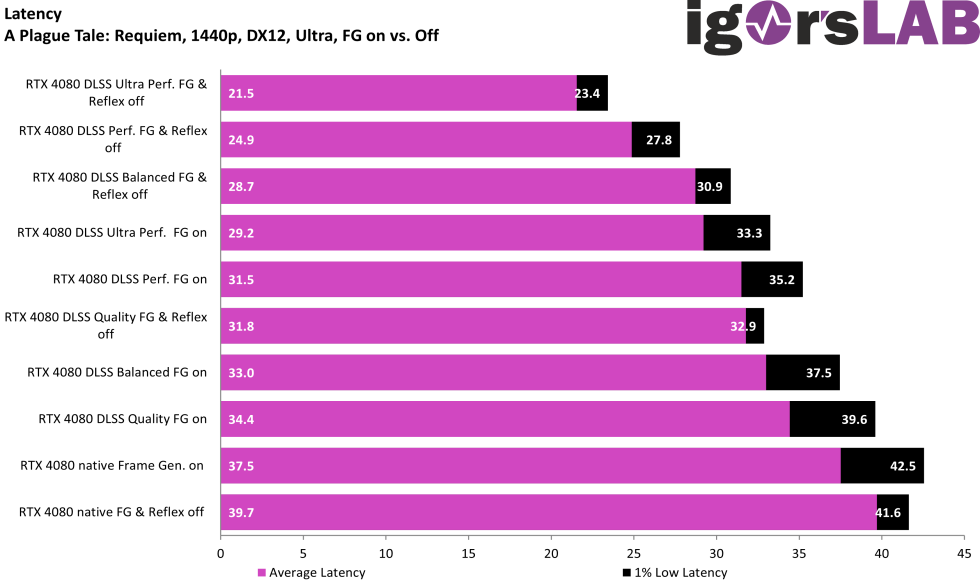

Latencies

There is one thing I wanted to show you graphically. DLSS also has its problems; not all that glitters is gold…

DLSS Performance @ 1440p

DLSS Ultra Performance @ 1440p

From the Ultra Performance level onward, DLSS can no longer be recommended in almost any game. This mode probably only exists to produce the longest bar in the chart. From my point of view it makes no sense at all, since you can't play that way and wouldn't want to anyway. It looks like crap even in UHD, and in 1080p, well, let's not… To the ladies and gentlemen at NVIDIA: an unusable DLSS mode should not exist in the first place. My opinion: either make it usable, or leave it out!

Greetings also go to AMD at this point: the FSR 2.1 Ultra Performance mode (e.g. in Cyberpunk or Spider-Man) looks just as poor. Here, too, the rule is: do better, or leave it out!

- 1 - Introduction and Test System

- 2 - Cyberpunk 2077 @ 2160p

- 3 - Cyberpunk 2077 @ 1440p

- 4 - Cyberpunk 2077 @ 1080p

- 5 - A Plague Tale: Requiem @ 2160p

- 6 - A Plague Tale: Requiem @ 1440p

- 7 - A Plague Tale: Requiem @ 1080p

- 8 - Bright Memory: Infinite @ 2160p

- 9 - Bright Memory: Infinite @ 1440p

- 10 - Bright Memory: Infinite @ 1080p

- 11 - Spider-Man Remastered @ 2160p

- 12 - Spider-Man Remastered @ 1440p

- 13 - Spider-Man Remastered DLSS vs. FSR vs. XeSS

- 14 - Summary and Conclusion
