Summary

I’m not entirely sure what exactly NVIDIA was thinking with this card, but in the mainstream segment they seem to have been in quite good spirits until the launch of the GeForce RTX 4060 Ti. They certainly didn’t expect all the headwind over the 8 GB of VRAM back then, so I’m not surprised by the marketing pirouettes and stunts staged in the run-up to today’s launch.

I won’t go into the tiresome memory issue again here, because I would only be repeating myself. The GeForce RTX 4060 8 GB also has positive sides, but you have to be willing to acknowledge them. It is almost 11% faster than a GeForce RTX 3060 12 GB in Full HD and still 8% faster in Ultra HD. That might not sound like much, but I used a gaming mix that is less prone to cherry-picking. So the 11 percent in Full HD holds up even outside the commissioned YouTube show.
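A percentage like this is usually obtained by normalizing each game’s average FPS to the reference card and then averaging the ratios. The following is a minimal sketch that assumes a geometric mean over per-game ratios; the review does not state its exact aggregation method. The function name `relative_advantage` and all FPS values are hypothetical and illustrative, not igor’sLAB measurements.

```python
from math import prod

def relative_advantage(test_fps, ref_fps):
    """Percentage advantage of the test card over the reference card.

    Assumption: per-game average FPS values are normalized to the reference
    card and combined with a geometric mean, so a single outlier title
    cannot dominate the result.
    """
    if not test_fps or len(test_fps) != len(ref_fps):
        raise ValueError("need two non-empty FPS lists of equal length")
    ratios = [t / r for t, r in zip(test_fps, ref_fps)]
    geo_mean = prod(ratios) ** (1 / len(ratios))
    return (geo_mean - 1) * 100

# Hypothetical Full HD averages for three games (illustrative, not measured data)
rtx_4060 = [105.0, 96.0, 129.6]   # per-game ratios: 1.05, 1.20, 1.08
rtx_3060 = [100.0, 80.0, 120.0]
print(f"{relative_advantage(rtx_4060, rtx_3060):.1f} %")  # prints 10.8 %
```

Showing only the second title would suggest a 20% lead. Across the whole mix, the advantage settles at roughly 10.8%, which is why a broad game selection is harder to cherry-pick.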

AMD’s Radeon RX 7600, which also belongs to the VRAM-starved 8 GB club, is beaten easily in pure rasterization, and with considerably lower power consumption. This is another plus for the GeForce RTX 4060, which could even be attractive to a certain extent if the price weren’t so exorbitant. I know from some board partners that they actually wanted to offer the card for less, but NVIDIA insisted on 299 USD as the MSRP (329 Euros as the RRP in Germany), for whatever reason. I would rather see 289 Euros as the entry price than the fixed 329 Euros. The price definitely won’t hold in this form, or the cards will sit on the shelves like lead.

The mainstream market definitely needs something new, so the card fits as a Full HD offering and technology carrier. I find DLSS practical, as well as the complete feature set of the Ada cards, even beyond gaming. But the sheer fear of cannibalizing NVIDIA’s own GeForce RTX 3060 12 GB, which for some inexplicable reason stays in the lineup until Q3, unfortunately cements the price at a level where buyers simply can’t be won over.

And a bit of harsh criticism must be allowed at this point. That a current card behaves so erratically at idle (for whatever reason) and the driver still hasn’t been fixed after days is completely beyond me. This matters all the more because the unnecessary omission of precise input measurement via shunts and clean monitoring leads to the cards drawing considerably more power under load than they should. It is even more surprising that every program reads far too low values via NVAPI. These values have nothing to do with the actual power consumption but, interestingly, match NVIDIA’s own marketing figures. The reference board was designed in such a cost-optimized way that the whole thing simply had to go wrong. If the board partners also follow this new “modesty”, efficiency will suffer even more.

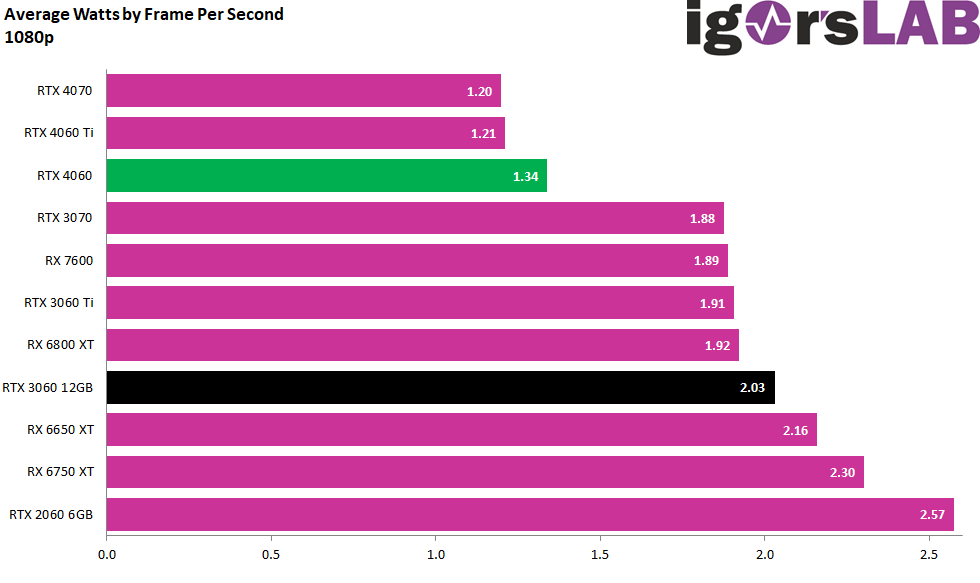

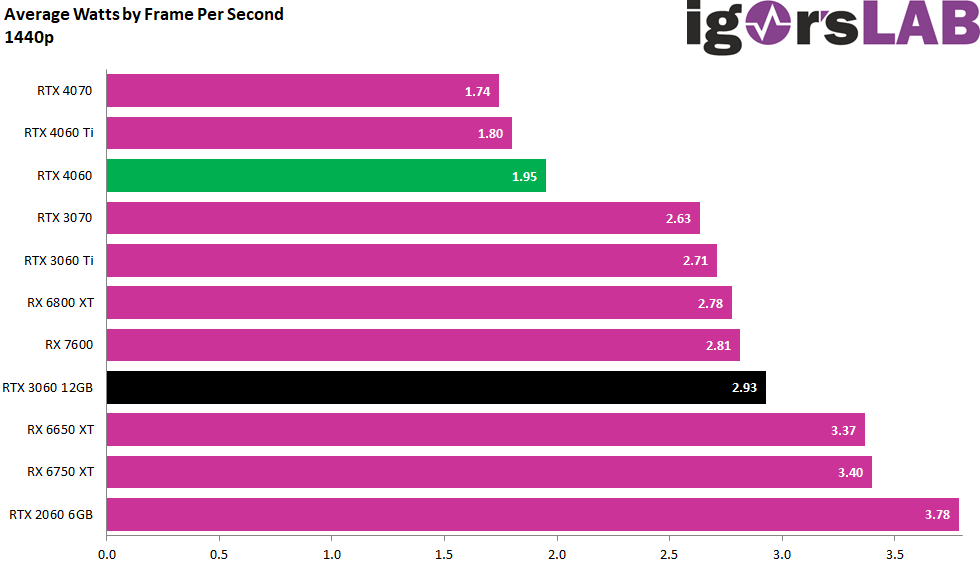

The efficiency measurements also show vividly that the GeForce RTX 4060 8 GB falls very clearly behind an RTX 4060 Ti or RTX 4070. This is due not only to the chip itself, but primarily to the boards and the technical implementation of the voltage converters. Everything that is already converted into heat on its way to the final consumer is later missing at the GPU. I can already reveal that a more carefully designed OC card does somewhat better in terms of efficiency, even though it was overclocked considerably. But more on that tomorrow, since we regular testers are unfortunately not allowed to get ahead of the embargo.
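Why conversion losses on the board cost efficiency can be illustrated with a deliberately simple model. Here is a minimal sketch under stated assumptions. Efficiency is expressed as frames per second per watt of measured board power, and the core voltage converters are reduced to a single efficiency factor. The 115 W limit, the 20 W for memory, fan and other loads, and both converter efficiencies are assumed, hypothetical numbers rather than measured values. The function names are likewise made up for illustration.

```python
def core_power_budget(board_limit_w, other_loads_w, vrm_efficiency):
    """Watts that actually reach the GPU core under a fixed board power limit.

    Simplified, hypothetical model: memory, fan and other loads are subtracted
    first, and the remainder passes through the core voltage converters with a
    single efficiency factor; the rest is lost as heat on the board.
    """
    if not 0 < vrm_efficiency <= 1:
        raise ValueError("vrm_efficiency must be in (0, 1]")
    converter_input_w = board_limit_w - other_loads_w
    return converter_input_w * vrm_efficiency

def fps_per_watt(avg_fps, board_power_w):
    """Efficiency metric: frames per second per watt of measured board power."""
    return avg_fps / board_power_w

# Two hypothetical board designs sharing the same assumed 115 W limit
for label, eff in (("cost-optimized VRM", 0.85), ("better VRM", 0.90)):
    core_w = core_power_budget(115.0, 20.0, eff)
    print(f"{label}: {core_w:.2f} W reach the GPU core")

# Hypothetical efficiency figure: 100 FPS at 125 W measured board power
print(f"{fps_per_watt(100.0, 125.0):.2f} FPS/W")
```

Under this toy model, the same board power limit leaves a few watts less for the GPU core on the cheaper design. Those watts are missing from clock speed and therefore from FPS per watt.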

The GeForce RTX 4060 8 GB is a card for Full HD at higher frame rates and is also suitable for WQHD with some limitations. There, however, you will have to consider smart upscaling, because without DLSS things get a bit tight in places even in WQHD. NVIDIA can definitely play to its strengths here, including the visual advantages that DLSS 2.x offers. And if a game supports DLSS 3.0 and you would otherwise be stuck in the unplayable FPS range, frame generation can even be the ultimate lifeline for playability. It does not improve latency (that stays the same), but not every genre is as latency-sensitive as many shooters.

Thus, you get all the advantages of the Ada architecture starting at 329 Euros (MSRP cards). The outdated memory capacity and the narrow memory interface, however, are drawbacks. The eight PCIe 4.0 lanes are completely sufficient, but as soon as the card has to access system memory, things get very tight, on older systems with PCIe 3.0 at the latest. Then the 11 percent advantage over the RTX 3060 12 GB is gone in the blink of an eye.
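The PCIe 3.0 penalty follows directly from the link arithmetic. PCIe 3.0 runs at 8 GT/s per lane and PCIe 4.0 at 16 GT/s, and both use 128b/130b line encoding. Halving the transfer rate on an x8 link therefore halves the bandwidth available for spilling over into system memory. This is a minimal sketch of theoretical one-direction link bandwidth only: it ignores packet and protocol overhead, so real throughput is lower. The helper name `pcie_bandwidth_gb_s` is hypothetical.

```python
# Transfer rate per lane in GT/s; PCIe 3.0 and 4.0 both use 128b/130b encoding.
PCIE_GT_PER_S = {3: 8.0, 4: 16.0}
ENCODING_EFFICIENCY = 128 / 130

def pcie_bandwidth_gb_s(generation, lanes):
    """Theoretical one-direction link bandwidth in GB/s (no protocol overhead)."""
    gt_per_s = PCIE_GT_PER_S[generation]
    return gt_per_s * ENCODING_EFFICIENCY * lanes / 8  # 8 bits per byte

# The RTX 4060 uses an x8 link; compare it in a PCIe 4.0 and a PCIe 3.0 slot
for gen in (4, 3):
    print(f"PCIe {gen}.0 x8: {pcie_bandwidth_gb_s(gen, 8):.2f} GB/s")
```

In a PCIe 3.0 slot, the x8 card is left with roughly 7.9 GB/s instead of roughly 15.8 GB/s, which is why running out of VRAM hurts so much more on older platforms.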

And if you ask me for a personal conclusion: it turns out slightly differently from what certain YouTubers were commissioned to present in advance. Not entirely negative, because I have mentioned the positive sides. But there is no real euphoria either, because the card simply costs too much and does not quite deliver what was promised in advance. Drivers can be fixed, no problem. VRAM and telemetry are a bit more complicated.

The graphics card was provided by Palit for this test. The only condition was compliance with the embargo; no influence was exerted and no compensation was paid.

- 1 - Introduction, technical data and technology

- 2 - Test system in the igor'sLAB MIFCOM PC

- 3 - Teardown: PCB, components and cooler

- 4 - Gaming performance FHD (1920 x 1080)

- 5 - Gaming performance WQHD (2560 x 1440)

- 6 - Gaming performance DLSS vs. DLSS3 vs. FSR

- 7 - Latency and DLSS 3.0

- 8 - Power consumption and load balancing

- 9 - Transients, cutting and PSU recommendation

- 10 - Temperatures, clock speeds, fans and noise

- 11 - Summary and conclusion
