What is spatial hearing?

Humans have (ideally) two ears, with the head sitting between them as an acoustic barrier. But how do we actually hear spatially, and how are we able to localize acoustic events and assign them to a specific position? The brain evaluates two cues: transit time differences (i.e. when exactly the sound reaches each ear) and intensity differences (differences in sound pressure level).

However, one thing must not be ignored: the ears and brain can only extract usable information about the position of a sound source from intensity and transit time differences if the sound itself also changes (sudden onset, spectrum, level, etc.). For example, the background noise of a forest or a large city can hardly be resolved spatially if you are in the middle of it. The more abrupt or rapid a change is, the easier it is to localize the sound source.

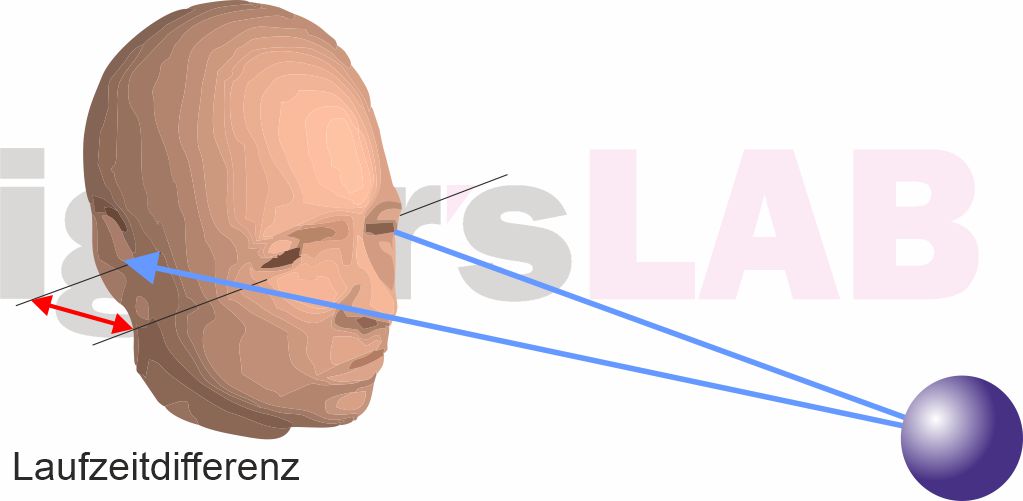

Time difference

The transit time difference is the difference between the times at which the sound waves from an event arrive at the two ears. If the source is not directly in front (at least 3° off-center), the sound reaches the nearer ear earlier than the other (see illustration). This time difference therefore depends on the different distances the sound has to travel to each ear, and human hearing can perceive even tiny time differences of 10 to 30 µs!

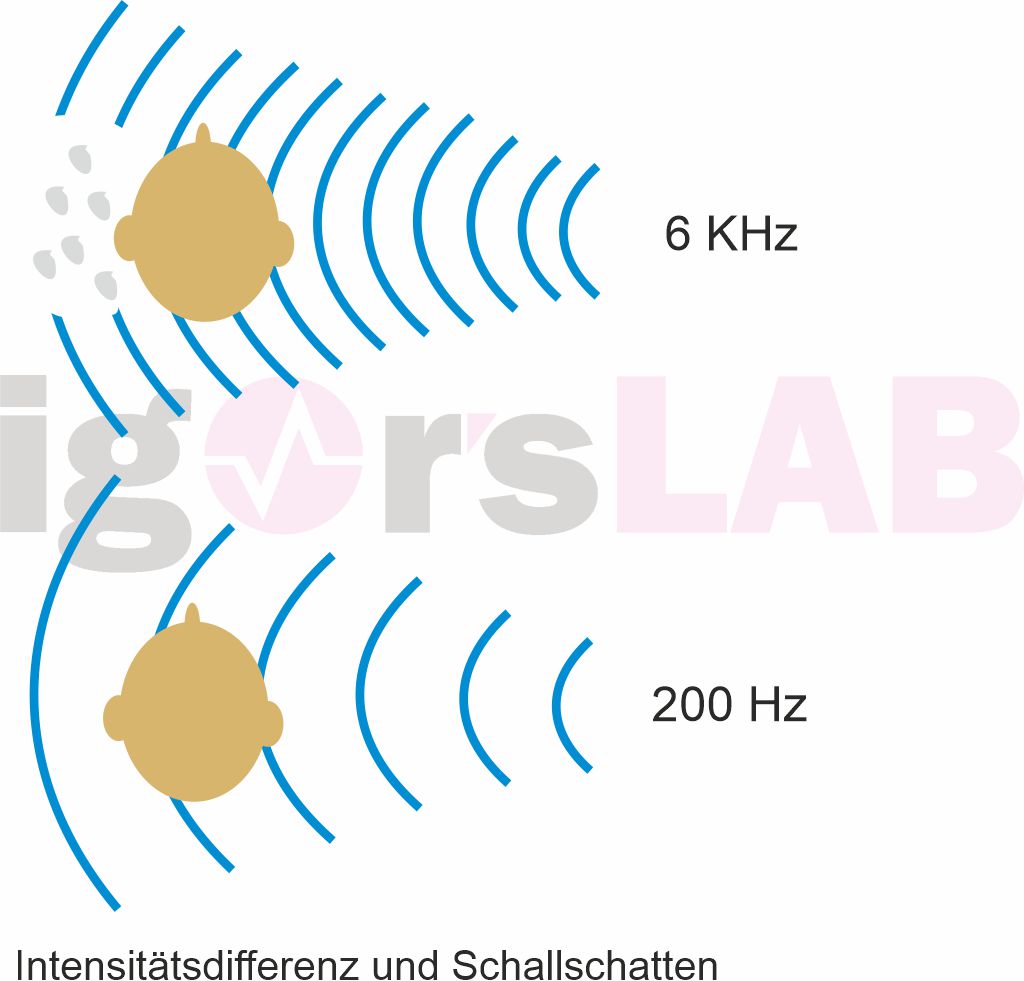
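As a rough plausibility check of these numbers, the transit time difference can be estimated from the extra distance to the far ear. The following is only a minimal sketch under stated assumptions: the ear spacing of 0.18 m, the speed of sound of 343 m/s and the straight-path sine model are illustrative choices that do not come from the article, and diffraction around the head is ignored.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # assumption: dry air at roughly 20 °C
EAR_SPACING_M = 0.18        # assumption: straight-line distance between the ears


def transit_time_difference_us(azimuth_deg, ear_spacing_m=EAR_SPACING_M):
    """Approximate transit time difference between the ears in microseconds.

    Simplified straight-path model: the extra distance to the far ear is
    ear_spacing * sin(azimuth). Diffraction around the head is ignored.
    """
    extra_path_m = ear_spacing_m * math.sin(math.radians(azimuth_deg))
    return extra_path_m / SPEED_OF_SOUND_M_S * 1e6


# 0° is straight ahead, 90° is directly to one side.
for angle in (0, 3, 30, 90):
    print(f"{angle:>2}°: {transit_time_difference_us(angle):6.1f} µs")
```

Under these assumptions, a source 3° off-center yields roughly 27 µs, which falls within the 10 to 30 µs range mentioned above, while a source directly to one side yields a little over 500 µs.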

Intensity difference

An intensity difference (level difference) occurs whenever the wavelength of the incident sound is small enough compared to the head that the sound is reflected and the head becomes an obstacle. As the illustration shows, a so-called acoustic shadow is then created on the side facing away from the source. However, this effect only occurs at frequencies above approx. 2 kHz and becomes stronger as the frequency increases. For the longer wavelengths of lower tones, the head is no longer an obstacle.
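The 2 kHz threshold becomes plausible when the wavelength is compared with the size of the head. The sketch below only illustrates that relationship; the head width of 0.18 m and the speed of sound of 343 m/s are assumed values, not figures from the article.

```python
SPEED_OF_SOUND_M_S = 343.0  # assumption: dry air at roughly 20 °C
HEAD_WIDTH_M = 0.18         # assumption: typical adult head width


def wavelength_m(frequency_hz):
    """Wavelength in meters for a given frequency in hertz."""
    return SPEED_OF_SOUND_M_S / frequency_hz


for frequency in (100, 500, 2000, 8000):
    lam = wavelength_m(frequency)
    ratio = lam / HEAD_WIDTH_M
    print(f"{frequency:>5} Hz: wavelength {lam:5.2f} m = {ratio:5.1f} x head width")
```

With these values, the wavelength at 2 kHz is about 0.17 m and thus roughly the size of the head, whereas at 100 Hz it is several meters long and simply bends around it.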

Locating an acoustic event: localization

If an acoustic event occurs outside the head, generated via loudspeakers for example, locating it is known as localization: by evaluating the information from both ears, the brain can precisely determine where the event originated.

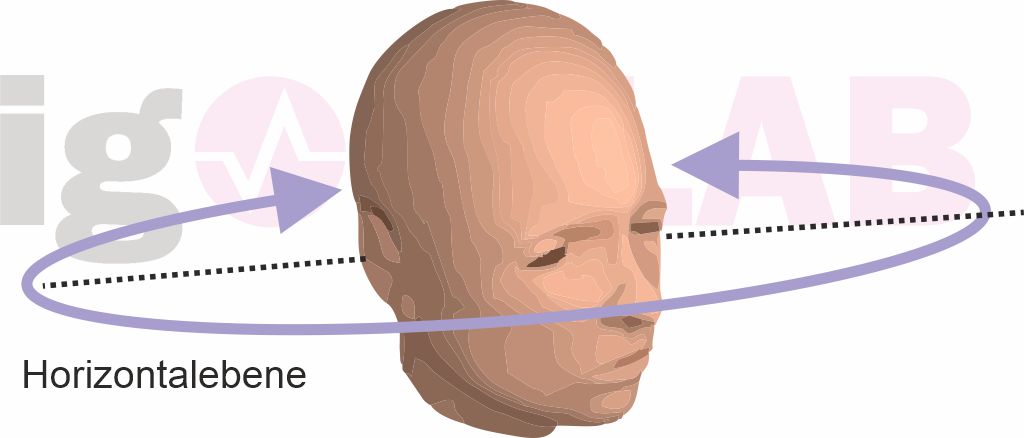

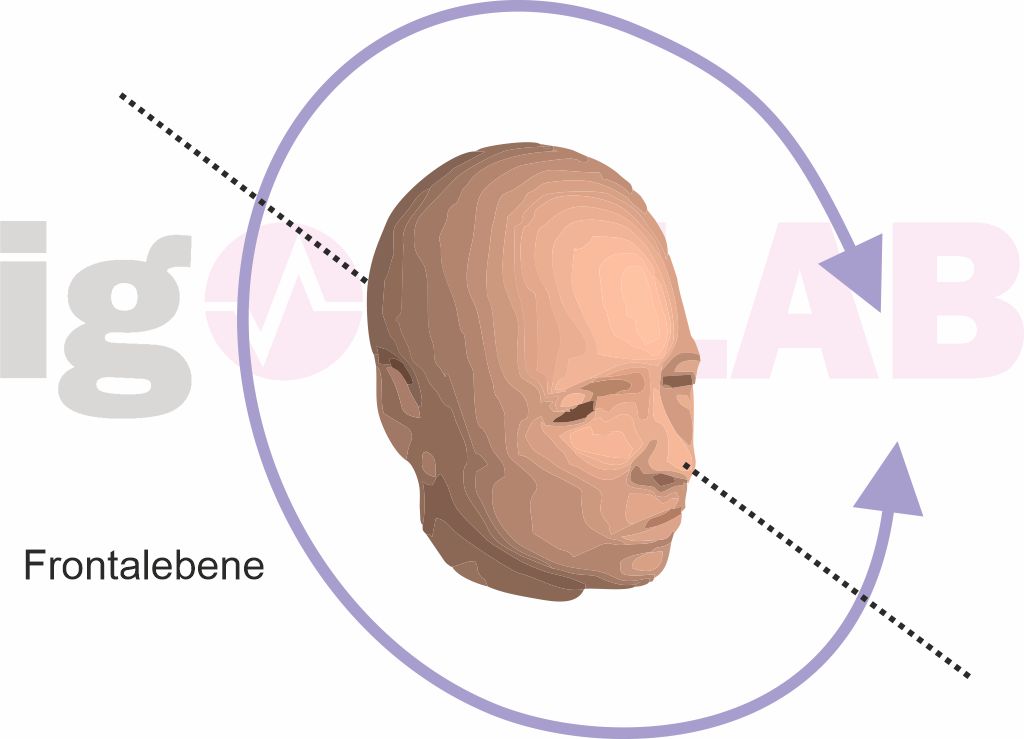

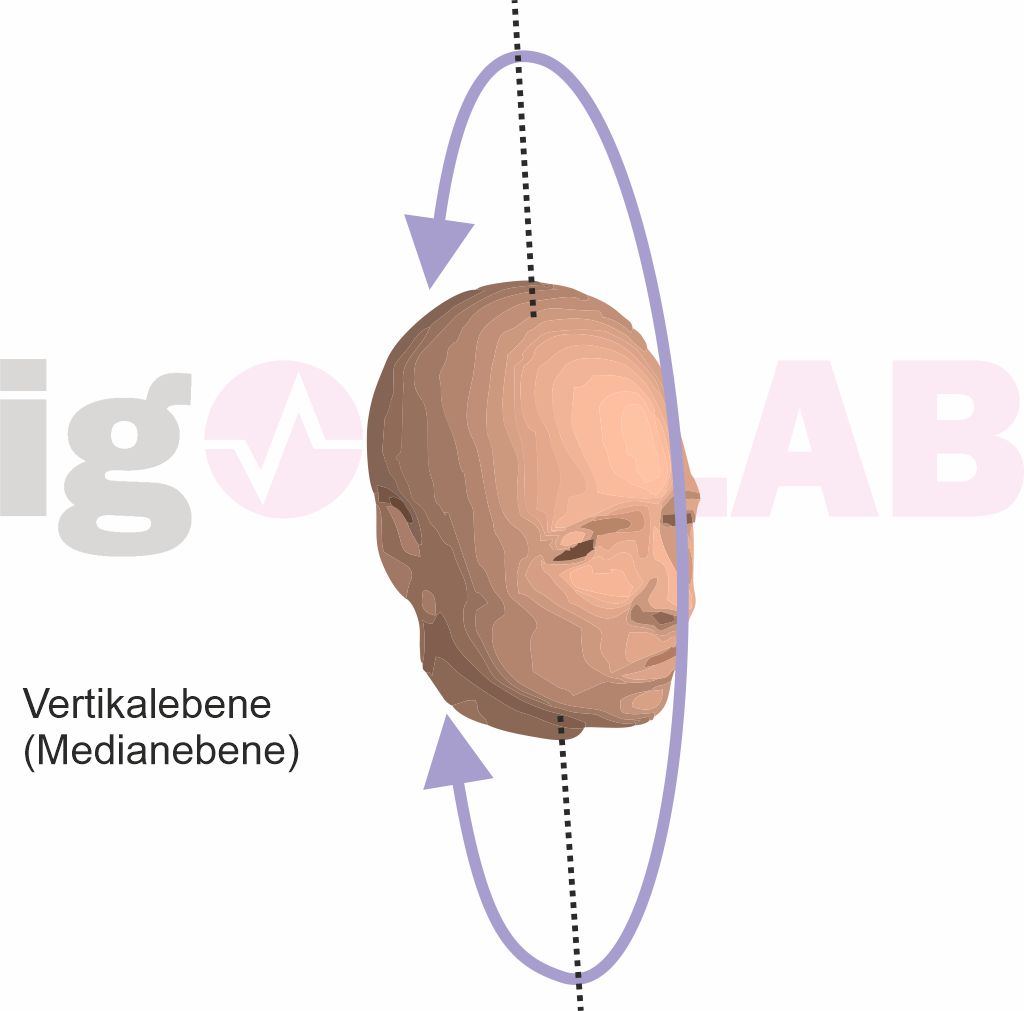

Incidentally, for precise spatial localization the head is also unconsciously always in motion, so that turning, raising, lowering or tilting it enables localization on all three axes (X, Z and Y). In this case, and only then, can we speak of real 3D (three-dimensional) sound, which cannot be produced with normal loudspeaker setups, since their speakers all sit at more or less the same height!

Special features of headphones

When using headphones, however, the stimulus is always perceived directly inside the head! As soon as the sound waves from both earcups are synchronized, the sound source is perceived as if it were located in the middle of the head, i.e. on the median plane!

Lateralization is the apparent movement of the sound source from the center of the head to one side. This headphone-typical “wandering” of a supposed sound source is in turn caused, as explained at the beginning, by a time difference (the signals are played with a delay between them) or an intensity difference (differences in volume). Ears are almost never completely identical, so if the two ears differ in sensitivity, the image will lateralize towards the better ear! This is why an exact balance setting is always the first step towards optimizing the listening impression.
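Both causes of lateralization can be illustrated with a few lines of code. The following is only a minimal sketch, not how any particular headset or surround software works: the function name `lateralize_right`, its parameters and the plain sample lists are hypothetical, chosen for illustration. It delays and attenuates the left channel of a mono signal, which should pull the perceived image towards the right ear.

```python
def lateralize_right(mono, delay_samples=0, level_db=0.0):
    """Build a (left, right) channel pair from a list of mono samples.

    The left channel is delayed by delay_samples (time difference) and
    attenuated by level_db decibels (intensity difference); the right
    channel stays unchanged apart from zero padding to equal length.
    """
    if delay_samples < 0 or level_db < 0:
        raise ValueError("delay_samples and level_db must be non-negative")
    gain = 10 ** (-level_db / 20)  # convert dB attenuation to a linear factor
    left = [0.0] * delay_samples + [sample * gain for sample in mono]
    right = list(mono) + [0.0] * delay_samples
    return left, right


# A single click, shifted by one sample and 6 dB towards the right ear.
click = [1.0, 0.0, 0.0]
left, right = lateralize_right(click, delay_samples=1, level_db=6.0)
print("left: ", [round(s, 3) for s in left])
print("right:", [round(s, 3) for s in right])
```

At an assumed sample rate of 48 kHz, a delay of one sample corresponds to about 21 µs, so even the smallest possible shift is already in the range the ear can detect.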

Spatial sound with headphones – trick or voodoo?

But much more happens during lateralization! Shifting the phase, i.e. the moment at which a wave hits the ear, can also lead to apparent shifts in position. The pinna itself plays a major role in localizing acoustic events, acting both as a sound collector and as a filter. The incoming sound signals are linearly distorted in different ways depending on the direction of the sound and the distance from the source. The shape of the pinna alone influences how a sound wave is reflected off its outer surface and enters the ear canal (and travels on to the eardrum). Even the hair on the pinna plays a role in this!

So, what does all this have to do with headphones? Everything and nothing! True three-dimensional hearing also requires the head to move on all axes, something that is simply not technically possible with binaural headphones that are fixed to the head! Distinguishing the actual position on the Z-axis (in front of or behind the head) or on the Y-axis (below or above the head) cannot be reproduced with one-dimensional headphones!

Even if the software works with phase shifts, time and level manipulation and changes to the frequency spectrum (everything from behind simply sounds a little deeper or duller), it is not real surround sound, even if the brain helps a little here: it relies on listening experience from real life and can therefore be fooled (from time to time).

What are the benefits of multiple drivers per ear cup?

Headphones with several drivers mounted at different angles can help with two-dimensional imaging, as sound hitting the ear from different angles can create a sense of “surround sound”, depending on your hearing and listening experience. However, the disadvantage of such systems often lies in the unpredictable interaction of multiple sound sources, because drivers positioned so close together interfere with each other (phase shift, cancellation). Music can no longer be enjoyed with such systems, and linear reproduction across the widest possible spectrum is also difficult.

There are now some useful multi-driver headsets on the market that can achieve such an illusion quite convincingly. But even in this case, such a perception is absolutely subjective in its interpretation and can never be transferred across the board to other people.

5.1.1 or 7.1 sound on headphones is therefore always a matter of imagination or is only possible with the help of the brain through personal listening experience – and even then is at most two-dimensional. True 3D does not even exist with loudspeakers, because only the X and Z axes are reproduced.

And what is the real advantage?

Personally, I prefer very good stereo headphones that provide differentiated and detailed resolution. What matters here is not only the reproducible frequency range and its linearity, but also the system's ability to cleanly resolve the content of a single sound source and to keep doing so when several (or many) acoustic events are mixed or occur simultaneously.

In hi-fi jargon, the separation of individual sources and their precise spatial localization within a large overall acoustic image is often referred to as a “stage”, the “width” of which is intended to describe the good spatial imaging ability of a headphone and its resolution capabilities. If all this is missing, it sounds muddy and undifferentiated. If, for example, noises and sounds are mixed into such an acoustic mush, the spatial imaging collapses like a house of cards.

Can anyone really “hear” this?

All tests with recorded surround material were run with a total of six test subjects aged between 16 and 50 (3x each) and with different headsets in random order. Only two of the test subjects were able to correctly identify the sources with the “real” surround headsets, three were at least partially correct and one could only guess. With the virtual surround headsets with only one driver per shell, only two people claimed to have “perceived anything at all”, but nobody was really error-free.

The more complex and louder the soundscape was, the higher the error rate! Incidentally, none of the test subjects in the blind test knew whether a headset with one or up to three drivers (bass) per shell was in use. Interestingly, two people also reported that the stereo reference headphones occasionally produced surround sound. This once again shows that everything happens in the brain and that the question cannot really be answered with a blanket yes or no.

Ergo: Imagination is also an education.

Before even thinking about so-called “surround sound”, including the promotional Dolby certificates, a headphone should first of all get the basics right: good resolution of individual acoustic events over the widest possible frequency spectrum, linear reproduction of this spectrum, a transient response that is as inconspicuous as possible and high level stability. Then the rest comes almost automatically.

Very good 5.1 systems with several drivers per ear cup can realize spatial imaging as an illusion with the help of the brain, but then usually fall short on fine resolution and clean reproduction in complex scenes with many simultaneous sound sources.

- 1 - The question: marketing or real advantage?

- 2 - Spatial hearing and plenty of voodoo

- 3 - Of tones, sounds and noises

- 4 - Analyzed: human speech

- 5 - Analyzed: footsteps and movement

- 6 - Analyzed: gunfire and explosions

- 7 - Analyzed: vehicles and local situations

- 8 - Summary and conclusion
