Fortunately, it is now widely accepted in the industry that you should run your RAM in at least dual-channel whenever possible. Unlike channels, however, ranks are still talked about very rarely, if at all. Many publications, some of them very well known, prefer instead to talk about “sticks” or modules affecting performance, which is not only wrong but also causes confusion and misinformation in the community. Today we want to examine some of these “myths” and correct them where necessary.

Single-Rank vs. Dual-Rank vs. Quad-Rank – explained in theory and proven with benchmarks

First we need to take a quick look at the theory behind memory channels and ranks, but don’t worry, I’ll keep it really short. Today’s desktop CPUs usually have two channels, i.e. two 64-bit connectors, to exchange data with the main memory. Since these two channels are used in parallel, the bandwidth and thus the throughput is effectively doubled compared to single channel, from 1x 64-bit to 2x 64-bit, effectively 128-bit. Quite simple and logical so far regarding the channels.

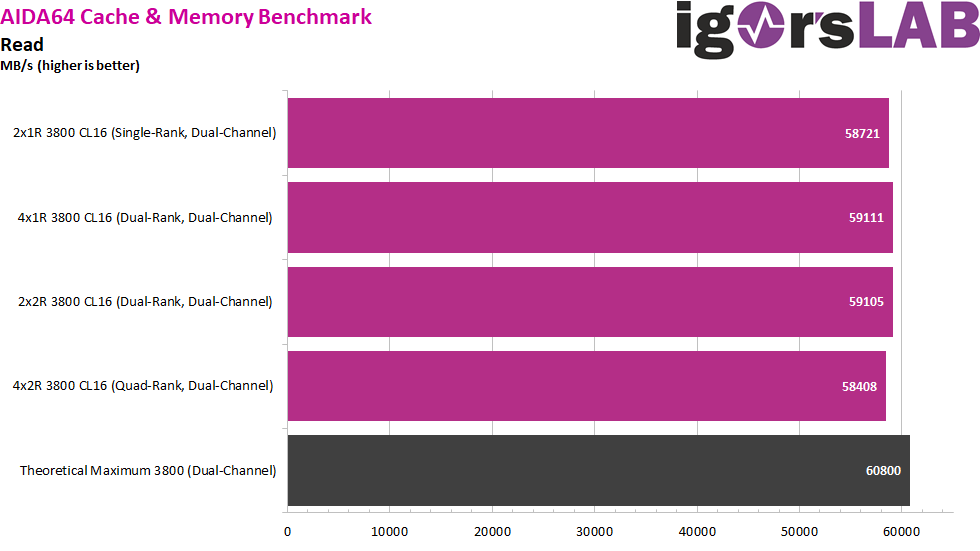

If we now use RAM at these two 64-bit connectors, it runs with a certain clock rate, e.g. 1900 MHz at DDR4-3800, which together with the factor 2 due to Double Data Rate (DDR) results in the theoretical bandwidth: 1900 MHz * 2 (DDR) * 64 bit * 2 (channels) = 486400 Mbit/s = 60800 MB/s (8 bit = 1 byte).
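
The same arithmetic as a small Python sketch, in case you want to plug in other clock rates. The function name and its parameters are chosen here purely for illustration; the formula is the one from the paragraph above.

```python
def theoretical_bandwidth_mb_s(clock_mhz, channels=2, bus_width_bits=64):
    """Theoretical peak bandwidth in MB/s for DDR memory.

    clock_mhz is the real memory clock (e.g. 1900 for DDR4-3800).
    """
    transfers_per_second_m = clock_mhz * 2          # DDR: two transfers per clock
    mbit_per_s = transfers_per_second_m * bus_width_bits * channels
    return mbit_per_s / 8                           # 8 bit = 1 byte


# DDR4-3800 in dual channel, as in the example above
print(theoretical_bandwidth_mb_s(1900))              # 60800.0
# The same memory in single channel delivers half of that
print(theoretical_bandwidth_mb_s(1900, channels=1))  # 30400.0
```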

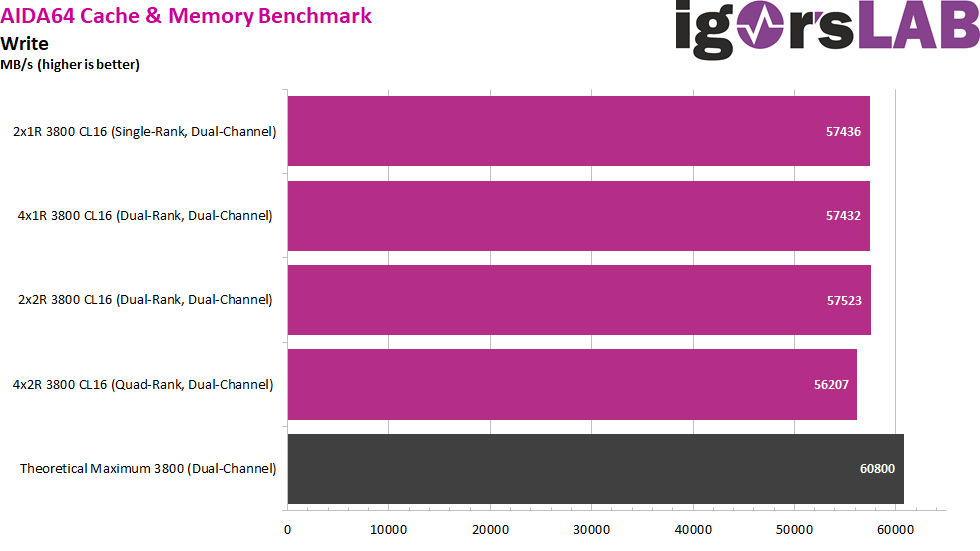

Mind you, this is only the theoretical bandwidth, because the main memory has other things to do besides exchanging data, e.g. refreshing itself or waiting for cells to recover from a write operation. This inefficiency can be reduced somewhat with tighter timings, but never eliminated completely, even with the highest-quality memory chips. We can see this quite nicely in the AIDA64 read and write tests, where all configs end up 5-10% below the theoretical maximum bandwidth.

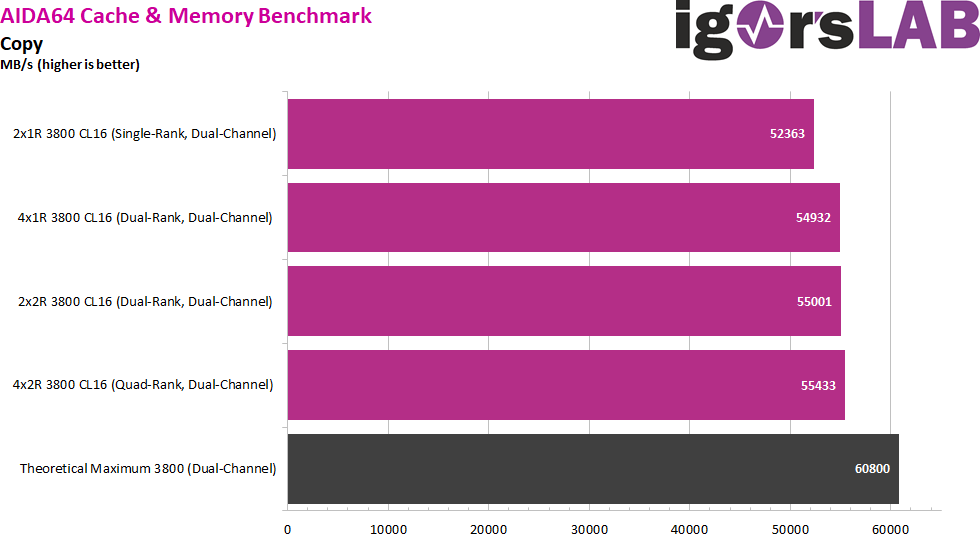

This is where multiple ranks come into play, because while the CPU only has one 64-bit connector per channel, on the memory side we can install several of these 64-bit connectors in the form of ranks. The theoretical bandwidth remains the same, but now the ranks can take turns doing work while another one is busy with itself. So basically we only increase the efficiency of the CPU’s 64-bit connectors (channels) by distributing the work across several corresponding 64-bit connectors on the RAM (ranks). By the way, this alternating between ranks is called interleaving, and you might have encountered it in the BIOS before.
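
To make the idea of ranks taking turns a bit more tangible, here is a deliberately simplified toy model in Python. Everything in it is an assumption made for illustration: the function name, the round-robin scheduling, and above all the timing values (`burst_time`, `recovery_time`), which are made up and do not correspond to real DRAM timings. It only shows the principle that a busy rank can be hidden behind another rank's transfer.

```python
def channel_efficiency(ranks, bursts=1000, burst_time=4, recovery_time=6):
    """Toy model of rank interleaving on ONE channel.

    The channel can move only one burst at a time. After a burst, the
    rank that delivered it is unavailable for recovery_time (hypothetical
    stand-in for refresh, write recovery, etc.). Requests are handed to
    the ranks in round-robin order.
    Returns the fraction of time the channel actually transferred data.
    """
    rank_ready_at = [0] * ranks   # earliest time each rank can start again
    bus_free_at = 0               # earliest time the channel is free again

    for i in range(bursts):
        rank = i % ranks
        start = max(bus_free_at, rank_ready_at[rank])
        end = start + burst_time
        bus_free_at = end
        rank_ready_at[rank] = end + recovery_time

    return (bursts * burst_time) / bus_free_at


for n in (1, 2, 4, 8):
    print(f"{n} rank(s) per channel: {channel_efficiency(n):.0%} channel utilization")
```

With these invented numbers, a single rank leaves the channel idle most of the time, two ranks roughly double the utilization, and from four ranks on the channel itself is the limit, so additional ranks gain nothing. The real-world differences measured in this article are far smaller, but the shape of the curve is the same.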

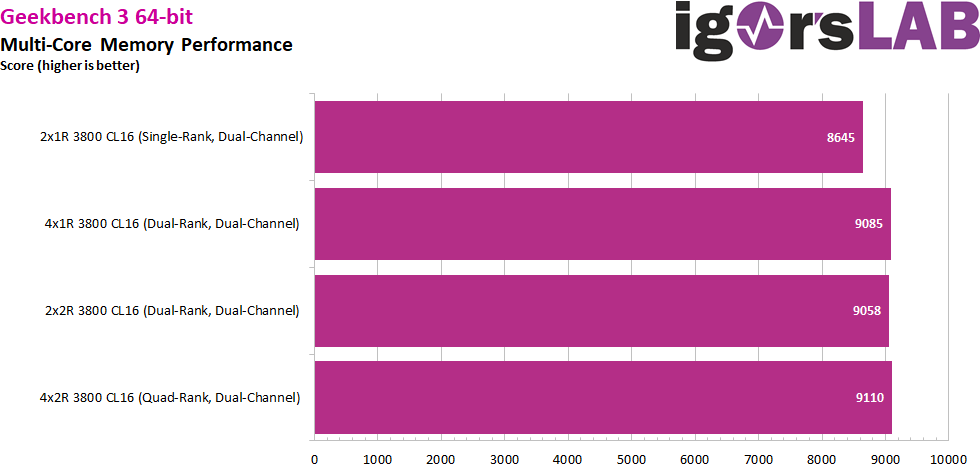

By increasing the number of ranks per channel, we ultimately improve the efficiency of that channel, especially for workloads that issue many memory requests in rapid succession. By the way, the CPU doesn’t care how many modules the ranks of a channel are distributed over. In the AIDA64 Copy test, we see nearly identical bandwidth results across the dual-rank configurations, now only 10% away from the theoretical maximum, compared to 14% with single-rank. That doesn’t sound like much at first, but the effects can be quite noticeable depending on the application, as we’ll see especially in our Cyberpunk 2077 tests.
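
To put those percentages into absolute numbers, here is a short Python sketch. It assumes the 60800 MB/s theoretical maximum from the DDR4-3800 dual-channel example earlier in the article; whether every tested configuration ran at exactly that setting is an assumption here, and the helper name is made up for illustration.

```python
THEORETICAL_MB_S = 60800  # DDR4-3800, dual channel (assumed test configuration)


def effective_bandwidth_mb_s(gap_percent, theoretical=THEORETICAL_MB_S):
    """Bandwidth that remains when we end up gap_percent below the maximum."""
    return theoretical * (100 - gap_percent) / 100


single_rank = effective_bandwidth_mb_s(14)  # 14% below the maximum
dual_rank = effective_bandwidth_mb_s(10)    # 10% below the maximum

print(f"single-rank: {single_rank:.0f} MB/s")
print(f"dual-rank:   {dual_rank:.0f} MB/s")
print(f"difference:  {dual_rank - single_rank:.0f} MB/s")
```

Under that assumption, the 4 percentage points correspond to roughly 2.4 GB/s of additional copy bandwidth at the same clock rate.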

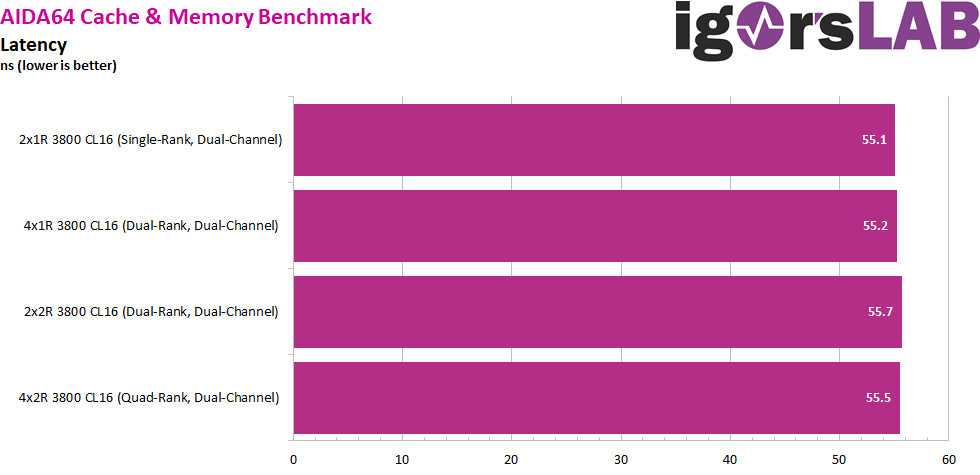

Now dual rank is not the end of the line, because there is also quad rank, i.e. 4 ranks per channel, and for servers octa rank, i.e. 8 ranks per channel, is not uncommon. At the same time, the gains from additional ranks keep shrinking, because the theoretical bandwidth maximum of the channels remains a hard limit: “diminishing returns”, so to speak. Accordingly, we see only 1 percentage point of difference between dual-rank and quad-rank in the AIDA64 Copy benchmark. By the way, the measured latency in our tests is almost unaffected by the number of ranks.

But even with 4 modules or 4 ranks per channel, the number of channels of the CPU remains the same. So even if there are now 4 ranks in a channel, the dual-channel CPU still runs in dual-channel, not quad-channel. There are CPUs that support more than 2 channels, but these are more likely to be found in the workstation or server market, on platforms such as AMD Threadripper, AMD Epyc, Intel X299 etc.
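
The distinction between modules, ranks and channels can also be spelled out in a few lines of Python. This is just an illustrative sketch: the function `summarize` and the way a configuration is written down as `(channel, ranks_on_module)` pairs are invented for this example and do not reflect how any tool or BIOS reports it.

```python
from collections import Counter


def summarize(cpu_channels, modules):
    """Count active channels and ranks per channel.

    modules is a list of (channel_index, ranks_on_module) tuples,
    one tuple per installed module.
    """
    ranks_per_channel = Counter()
    for channel, ranks in modules:
        if not 0 <= channel < cpu_channels:
            raise ValueError(f"CPU has no channel {channel}")
        ranks_per_channel[channel] += ranks
    return {
        "active_channels": len(ranks_per_channel),
        "ranks_per_channel": dict(sorted(ranks_per_channel.items())),
    }


# Dual-channel CPU with four single-rank modules (two per channel)
four_single_rank = [(0, 1), (0, 1), (1, 1), (1, 1)]
# Dual-channel CPU with two dual-rank modules (one per channel)
two_dual_rank = [(0, 2), (1, 2)]
# Dual-channel CPU with four dual-rank modules (two per channel)
four_dual_rank = [(0, 2), (0, 2), (1, 2), (1, 2)]

print(summarize(2, four_single_rank))
print(summarize(2, two_dual_rank))
print(summarize(2, four_dual_rank))
```

The first two configurations come out identical from the CPU's point of view (dual-channel, dual-rank), and the third is still dual-channel, just with four ranks per channel.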

But besides better performance, multiple ranks have another advantage, namely more capacity. A rank is always 64 bits wide, so its capacity can only grow by installing memory chips with more capacity. Instead of doing that, it is relatively easy to install several ranks of memory chips of the same, smaller size. Depending on the type of memory, this can potentially save costs, both for the manufacturer and the consumer.

The only downside to having many ranks is that the CPU has to keep up with the growing management overhead of addressing and supplying all the ranks. With desktop CPUs, this usually shows in the maximum possible clock speed decreasing as the number of ranks increases, while server CPUs often have a fixed limit for the number of supported ranks per channel, which you as an integrator have to divide up as wisely as possible among the various RAM modules. We can observe such an effect of higher management overhead in the AIDA64 Write test, where the quad-rank configuration ends up noticeably behind the others.

So, as with so many things, it comes down to a good compromise. First you should fill all channels, then run them at the maximum possible clock and thus throughput, then tighten the timings as much as possible, and only then install as many ranks as possible without negatively affecting the clock. And of course you should always install enough RAM for the respective application in the first place, so that the system doesn’t have to fall back on the page file and the comparatively slow hard disk, because that is a total meltdown for performance.
