Without wanting to spoil anyone’s fun right in the first sentence: AMD is back in GPUs, too. And how! Still, despite all the euphoria about the enormous performance gain, fairness requires looking at the entire pixel cosmos: performance (rasterization, DXR, compute), feature set, electrical and mechanical implementation, and drivers. That makes today’s article somewhat more complex and certainly not easier, even if, for various reasons, not everything could be tested in full. But there is a remedy for that as well: the follow-ups!

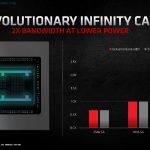

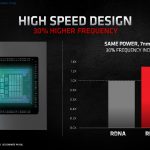

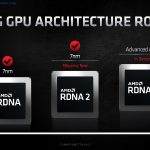

But back to the topic and the two protagonists of today’s launch review: the Radeon RX 6800 XT and its smaller sibling, the Radeon RX 6800. As is well known, the flagship Radeon RX 6900 XT will follow later. So what makes the two new cards so interesting for end users? With RDNA 2, AMD introduces new power-saving techniques, and the “Infinity Cache” is meant to deliver more memory bandwidth per watt, because the memory interface itself is kept rather narrow at 256 bits for efficiency reasons.

The new graphics cards already support the new AV1 video codec, support DirectX 12 Ultimate for the first time, and thus also DirectX Raytracing (DXR). With AMD FidelityFX, they also offer a toolkit intended to give developers more freedom in their choice of effects. Variable Rate Shading (VRS) is included as well; it can save a considerable amount of computing power by intelligently reducing the shading quality in image areas the player is not focusing on anyway.
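As a rough illustration of where VRS savings come from: at a 2x2 shading rate, a single pixel-shader invocation covers four pixels. The sketch below assumes a simple model in which a fixed share of the frame is shaded coarsely; the 50 % share in the example is hypothetical, and real savings depend on the game and on whether the workload is actually pixel-shader bound.

```python
# Illustrative VRS arithmetic: pixel-shader invocations per frame when part of
# the frame is shaded at a coarser rate. The region share used below is a
# hypothetical example value, not a measurement from this review.

def shading_invocations(width: int, height: int, coarse_fraction: float,
                        coarse_rate: tuple[int, int] = (2, 2)) -> float:
    """Approximate pixel-shader invocations per frame.

    coarse_fraction: share of the screen (0..1) shaded at coarse_rate.
    coarse_rate: (x, y) shading rate, e.g. (2, 2) = one invocation per 2x2 block.
    """
    if not 0.0 <= coarse_fraction <= 1.0:
        raise ValueError("coarse_fraction must be between 0 and 1")
    pixels = width * height
    block = coarse_rate[0] * coarse_rate[1]
    full_rate = pixels * (1.0 - coarse_fraction)   # one invocation per pixel
    reduced = pixels * coarse_fraction / block      # one invocation per block
    return full_rate + reduced

full = shading_invocations(3840, 2160, 0.0)
vrs = shading_invocations(3840, 2160, 0.5)  # hypothetical: half the frame at 2x2
print(f"savings: {1 - vrs / full:.1%}")  # savings: 37.5%
```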

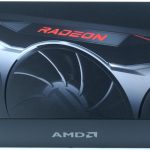

The Radeon RX 6800 XT and RX 6800 in the reference design

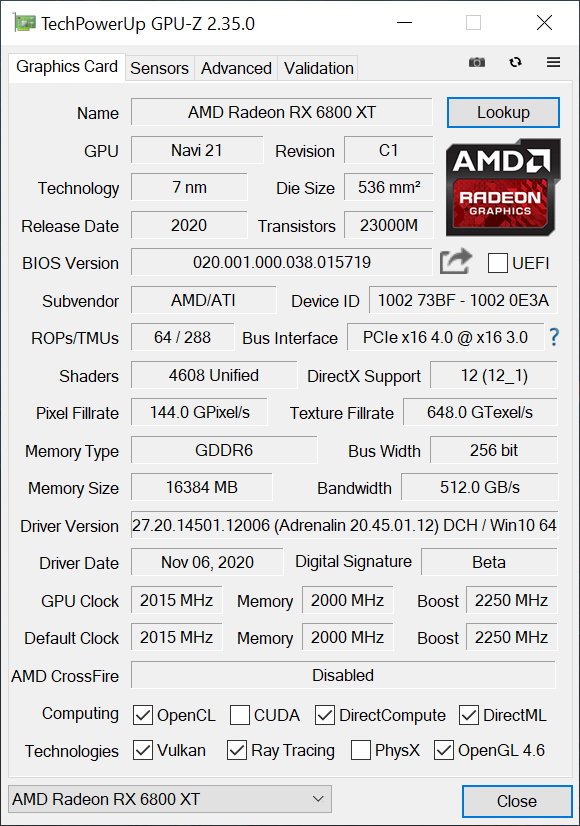

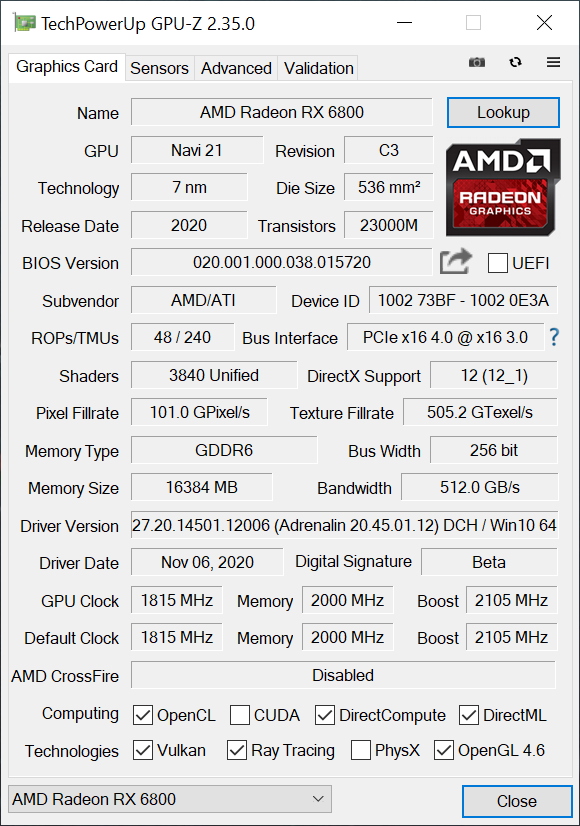

With 72 Compute Units (CUs), the RX 6800 XT has 4608 shaders, while the RX 6800 has 60 CUs and 3840 shaders. The RX 6800 XT is specified with a game clock of 2015 MHz and a boost clock of 2250 MHz, while the RX 6800 has to make do with 1815 and 2105 MHz respectively. Both cards use 16 GB of GDDR6 at 16 Gbps, made up of eight 2 GB modules. They also share the 256-bit memory interface and the 128 MB Infinity Cache, which is meant to solve the bandwidth problem.
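The headline figures are consistent with each other, which a few lines of arithmetic can confirm: 3840 shaders correspond to 60 CUs, and eight modules yield both 16 GB and the 256-bit bus. The sketch assumes 64 shaders per RDNA 2 CU and a 32-bit interface per GDDR6 module; the resulting 512 GB/s is raw DRAM bandwidth, before any benefit from the Infinity Cache.

```python
# Back-of-the-envelope figures derived from the specifications.
# Assumptions: 64 shaders per RDNA 2 Compute Unit, 32-bit interface per GDDR6 module.

SHADERS_PER_CU = 64
BITS_PER_GDDR6_MODULE = 32

def card_figures(cus: int, modules: int, gb_per_module: int, gbps_per_pin: float) -> dict:
    bus_width_bits = modules * BITS_PER_GDDR6_MODULE
    return {
        "shaders": cus * SHADERS_PER_CU,
        "vram_gb": modules * gb_per_module,
        "bus_width_bits": bus_width_bits,
        # GB/s = bus width (bits) * data rate per pin (Gbit/s) / 8 bits per byte
        "bandwidth_gb_s": bus_width_bits * gbps_per_pin / 8,
    }

rx6800xt = card_figures(cus=72, modules=8, gb_per_module=2, gbps_per_pin=16)
rx6800 = card_figures(cus=60, modules=8, gb_per_module=2, gbps_per_pin=16)
print(rx6800xt)  # {'shaders': 4608, 'vram_gb': 16, 'bus_width_bits': 256, 'bandwidth_gb_s': 512.0}
print(rx6800["shaders"])  # 3840
```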

The RX 6800 XT weighs 1501 grams and is 26.7 cm long, 12 cm high (11.5 cm installation height from the PEG slot) and 4.5 cm thick (2.5-slot design), plus a total of four millimeters for the backplate and PCB. The slot bracket is closed and carries one HDMI 2.1 and two DisplayPort connectors, plus a USB Type-C port. The body is made of light metal, the Radeon lettering is illuminated, and power is supplied via two 8-pin connectors. More on this in the teardown on the next page.

The smaller RX 6800 weighs 1386 grams and is also 26.7 cm long, 12 cm high (11.5 cm installation height from the PEG slot) and 3.8 cm thick (2.5-slot design), plus a total of four millimeters for the backplate and PCB. The slot bracket is also closed and carries one HDMI 2.1 and two DisplayPort connectors, plus a USB Type-C port. The body is also made of light metal, the Radeon lettering is illuminated in red, and power is supplied via two 8-pin connectors.

The two GPU-Z screenshots provide the remaining specifications of both cards:

Ray tracing / DXR

Since the presentation of the new Radeon cards at the latest, it has been clear that AMD will also support ray tracing. AMD takes a path that differs significantly from NVIDIA’s and implements a so-called “Ray Accelerator” in each Compute Unit (CU). Since the Radeon RX 6800 XT has a total of 72 CUs, it has 72 of these accelerators, compared to 60 for the smaller Radeon RX 6800. A GeForce RTX 3080 comes with 68 RT cores, so nominally fewer at first glance. Among the smaller cards, it is 60 for the RX 6800 versus 46 for the GeForce RTX 3070. However, the RT cores are organized differently, and it remains to be seen how much sheer numbers can achieve against specialization here. So in the end, this is initially an apples-to-oranges comparison.

But what has AMD come up with here? Each of these accelerators can compute up to four ray/box intersections or one ray/triangle intersection per clock cycle. This is how the intersections of the rays with the scene geometry are determined (based on a Bounding Volume Hierarchy, BVH); the results are pre-sorted and then returned to the shaders, which either process them further within the scene or output the final shading result. NVIDIA’s RT cores, however, appear to be considerably more complex, as I explained in detail at the Turing launch. In the end, only the result counts, and that is exactly what we have benchmarks for.
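To make the term “ray/box intersection” concrete: while traversing a BVH, each node’s bounding box is tested against the ray, and only children whose boxes are hit are visited further. The sketch below is the generic, textbook “slab” test in software; it is only an illustration of the kind of test involved and not AMD’s hardware implementation, which reportedly performs four such box tests per clock.

```python
# Textbook "slab" test for a ray against an axis-aligned bounding box (AABB).
# Generic software sketch of a BVH node test, NOT AMD's hardware implementation.
import math

def ray_box_intersect(origin, direction, box_min, box_max):
    """Return (t_near, t_far) along the ray if it hits the box, else None."""
    t_near, t_far = -math.inf, math.inf
    for o, d, lo, hi in zip(origin, direction, box_min, box_max):
        if d == 0.0:
            # Ray is parallel to this pair of planes: it must start between them.
            if o < lo or o > hi:
                return None
            continue
        inv = 1.0 / d
        t0, t1 = (lo - o) * inv, (hi - o) * inv
        if t0 > t1:
            t0, t1 = t1, t0
        t_near, t_far = max(t_near, t0), min(t_far, t1)
        if t_near > t_far:
            return None  # the slab intervals do not overlap: miss
    if t_far < 0.0:
        return None  # box lies entirely behind the ray origin
    return t_near, t_far

# A ray along +x from the origin hits a unit box spanning x = 2..3.
print(ray_box_intersect((0, 0.5, 0.5), (1, 0, 0), (2, 0, 0), (3, 1, 1)))  # (2.0, 3.0)
```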

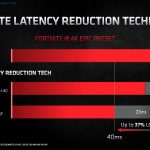

Smart Access Memory (SAM)

AMD already showed SAM, short for Smart Access Memory, at the presentation of the new Radeon cards. I have enabled this feature in addition to the normal benchmarks, which also allows a direct comparison. Strictly speaking, though, SAM is nothing new, just more attractively packaged. Behind it is nothing more than the clever handling of the Base Address Register (BAR), and exactly this support has to be enabled in the underlying platform. Resizable PCI BARs (see also the PCI-SIG specification of April 24, 2008) have played an important role in modern AMD graphics hardware for quite some time, since the actual PCI BARs are normally limited to 256 MB, while the new Radeon graphics cards now offer up to 16 GB of VRAM.
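The size of the gap is easy to quantify: a 256 MB window onto 16 GB of VRAM exposes only 1/64 of it to the CPU at any one time. The sketch assumes that a resized BAR must be a power of two of at least 1 MB, as in the PCI-SIG Resizable BAR capability; the helper names are hypothetical.

```python
# How much VRAM the CPU can address directly through the BAR window.
# Assumption: resizable BAR sizes are powers of two, starting at 1 MiB.

MIB = 1024 ** 2
GIB = 1024 ** 3

def visible_fraction(bar_bytes: int, vram_bytes: int) -> float:
    """Share of VRAM mapped into the CPU's address space at once."""
    return min(bar_bytes, vram_bytes) / vram_bytes

def smallest_bar_size(vram_bytes: int) -> int:
    """Smallest power-of-two BAR size (>= 1 MiB) that covers all of the VRAM."""
    size = MIB
    while size < vram_bytes:
        size *= 2
    return size

print(f"{visible_fraction(256 * MIB, 16 * GIB):.4%}")  # 1.5625%
print(smallest_bar_size(16 * GIB) // GIB)              # 16
```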

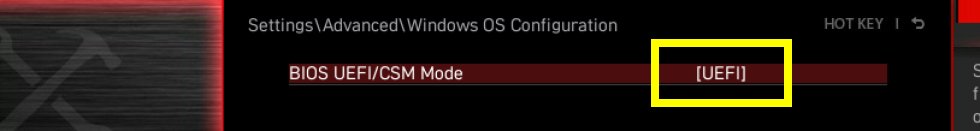

As a consequence, only a fraction of the VRAM is directly accessible to the CPU, which without SAM requires a whole range of workarounds in the driver stack. This naturally costs performance and should therefore be avoided, and that is exactly where AMD comes in with SAM. The technique is not new, but it has to be implemented cleanly in the UEFI firmware and then enabled. This in turn only works if the system is running in UEFI mode with CSM/legacy support disabled.

CSM stands for Compatibility Support Module. It is only available under UEFI and ensures that older hardware and software also work with UEFI. The CSM is always helpful when not all hardware components are UEFI-compatible, and some older operating systems as well as the 32-bit versions of Windows cannot be installed on pure UEFI systems. However, it is precisely this compatibility setting that often prevents the clean UEFI installation of Windows that the new AMD components require.
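The preconditions named above can be summarized as a simple checklist: UEFI boot mode, CSM/legacy disabled, and SAM enabled in the UEFI setup. The sketch below is purely illustrative; `FirmwareSettings` and its fields are hypothetical stand-ins for the actual setup options, and it does not query any real firmware.

```python
from dataclasses import dataclass

@dataclass
class FirmwareSettings:
    # Hypothetical fields standing in for the relevant UEFI setup options.
    boots_in_uefi_mode: bool
    csm_enabled: bool
    sam_enabled: bool  # the SAM / resizable-BAR option in the UEFI setup

def sam_blockers(s: FirmwareSettings) -> list[str]:
    """List the reasons SAM cannot be active under the conditions described above."""
    problems = []
    if not s.boots_in_uefi_mode:
        problems.append("system is not booting in UEFI mode")
    if s.csm_enabled:
        problems.append("CSM/legacy support is still enabled")
    if not s.sam_enabled:
        problems.append("SAM is not enabled in the UEFI setup")
    return problems

print(sam_blockers(FirmwareSettings(True, True, True)))
# ['CSM/legacy support is still enabled']
```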

Benchmarks and evaluation

For the benchmarks, I deliberately chose 10 games this time, balanced between old and new titles as well as between AMD- and NVIDIA-leaning ones. Three of them are additionally measured with DXR, but only in 1080p to keep frame rates playable. For the same reason, NVIDIA’s DLSS is omitted: it could have been included, but it makes little sense at this resolution. I do, however, measure the two Radeons both with and without SAM across all games and resolutions, even though SAM is currently just as proprietary as NVIDIA’s DLSS. At least it does not have to be implemented in the games and is therefore always available, provided the hardware supports it.
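Comparing runs with and without SAM comes down to relative changes in frame rate. The sketch below shows a per-game uplift and, as one common way to combine several games, the geometric mean of the per-game ratios; whether the summary in this review aggregates exactly this way is an assumption, and the FPS values are hypothetical.

```python
import statistics

def uplift_percent(fps_without: float, fps_with: float) -> float:
    """Relative change in average FPS when SAM is enabled, in percent."""
    if fps_without <= 0:
        raise ValueError("baseline FPS must be positive")
    return (fps_with / fps_without - 1.0) * 100.0

def mean_uplift_percent(results: list[tuple[float, float]]) -> float:
    """Geometric mean of per-game FPS ratios (with / without SAM), in percent."""
    ratios = [with_sam / without for without, with_sam in results]
    return (statistics.geometric_mean(ratios) - 1.0) * 100.0

# Hypothetical (without SAM, with SAM) averages, NOT results from this review.
games = [(100.0, 106.0), (80.0, 80.0), (120.0, 123.0)]
print(f"{uplift_percent(*games[0]):+.1f} %")  # +6.0 %
print(f"{mean_uplift_percent(games):+.1f} %")
```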

Each game gets its own page with a total of six charts per resolution or setting. These are self-explanatory, so I will spare you text that the charts make redundant anyway: facts, not words. A cumulative summary with a detailed explanation follows at the end. Efficiency and power consumption are also covered per game and cumulatively, as are frame times and their variance, because percentiles alone do not tell the whole story.
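Why percentiles alone are not enough can be shown with two synthetic frame-time logs that share the same average frame rate but differ in smoothness. The metric definitions below (nearest-rank 99th percentile shown as “P1 low”, standard deviation, mean frame-to-frame delta) are common choices and an assumption here, not necessarily the exact formulas behind the charts in this review.

```python
import math
import statistics

def frame_time_stats(frame_times_ms: list[float]) -> dict:
    """Summarize a frame-time log given in milliseconds per frame."""
    if len(frame_times_ms) < 2:
        raise ValueError("need at least two frames")
    n = len(frame_times_ms)
    # Average FPS = frames / elapsed time (not the mean of per-frame FPS values).
    avg_fps = n / (sum(frame_times_ms) / 1000.0)
    # Nearest-rank 99th percentile of frame time, expressed as "P1 low" FPS.
    ordered = sorted(frame_times_ms)
    p99_ms = ordered[math.ceil(99 * n / 100) - 1]
    # Frame-to-frame deltas capture stutter that averages and percentiles hide.
    deltas = [abs(b - a) for a, b in zip(frame_times_ms, frame_times_ms[1:])]
    return {
        "avg_fps": avg_fps,
        "p1_low_fps": 1000.0 / p99_ms,
        "frame_time_stdev_ms": statistics.pstdev(frame_times_ms),
        "mean_delta_ms": statistics.fmean(deltas),
    }

smooth = [10.0] * 100          # synthetic: constant 10 ms per frame
uneven = [8.0, 12.0] * 50      # synthetic: same total time, alternating frames
print(frame_time_stats(smooth)["avg_fps"], frame_time_stats(uneven)["avg_fps"])  # 100.0 100.0
print(frame_time_stats(uneven)["mean_delta_ms"])  # 4.0
```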

Test system and evaluation software

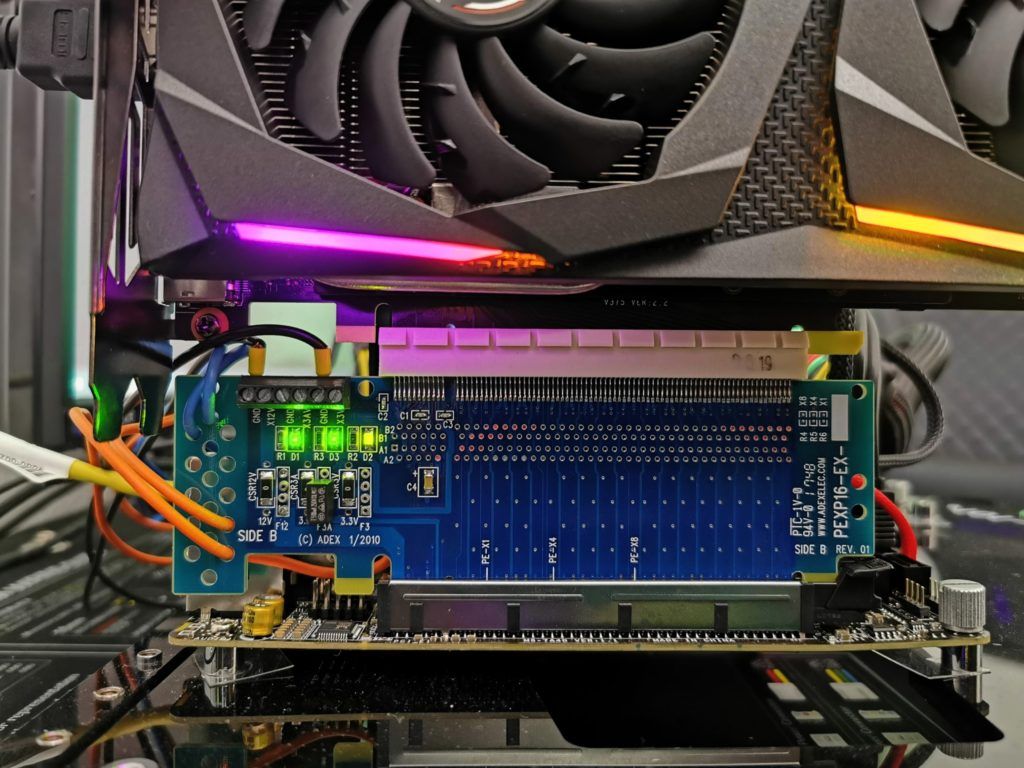

The benchmark system is new and now entirely AMD-based; PCIe 4.0 is of course mandatory. It includes the matching X570 motherboard in the form of an MSI MEG X570 Godlike and the Ryzen 9 5950X, which is water-cooled and slightly overclocked. In addition, there is matching DDR4 4000 RAM from Corsair in the form of the Vengeance RGB, as well as several fast NVMe SSDs. For direct logging during all games and applications, I use both NVIDIA’s PCAT and my own shunt measurement system, which makes things much more convenient. Detailed power consumption and other, somewhat more complicated measurements are carried out in a dedicated lab on two tracks: using high-resolution oscilloscope technology…

…and using the self-built, MCU-based measurement setup for motherboards and graphics cards (pictures below). This is also where the thermographic infrared images are taken with a high-resolution industrial camera in an air-conditioned room. The audio measurements are then taken outside the lab in my own chamber (room-in-room design).
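In principle, a shunt-based system derives each rail’s current from the voltage drop across a known low-value resistor and multiplies it by the rail voltage; summing all rails that feed the card (PCIe slot and external connectors) gives the board power, which in turn feeds efficiency metrics such as FPS per watt. The sketch below shows only this arithmetic for a single instant. The 2 milliohm shunt and all readings are hypothetical, and it says nothing about how the actual measurement firmware or the Powenetics software works; real logs average many samples over time.

```python
from dataclasses import dataclass

@dataclass
class RailSample:
    """One simultaneous reading on a single supply rail."""
    name: str
    rail_voltage_v: float  # voltage at the connector
    shunt_drop_v: float    # voltage drop across the shunt resistor
    shunt_ohms: float      # shunt resistance

    @property
    def current_a(self) -> float:
        return self.shunt_drop_v / self.shunt_ohms  # Ohm's law: I = U / R

    @property
    def power_w(self) -> float:
        return self.rail_voltage_v * self.current_a  # P = U * I

def board_power_w(samples: list[RailSample]) -> float:
    """Total board power: the sum over all rails feeding the card."""
    return sum(s.power_w for s in samples)

def fps_per_watt(avg_fps: float, avg_board_power_w: float) -> float:
    """Simple efficiency metric: frames per second per watt of board power."""
    return avg_fps / avg_board_power_w

# Hypothetical readings with a hypothetical 2 milliohm shunt, NOT measured values.
SHUNT = 0.002
samples = [
    RailSample("PEG slot 12 V", 12.0, 0.005, SHUNT),
    RailSample("PEG slot 3.3 V", 3.3, 0.002, SHUNT),
    RailSample("8-pin #1", 12.0, 0.024, SHUNT),
    RailSample("8-pin #2", 12.0, 0.024, SHUNT),
]
print(f"{board_power_w(samples):.1f} W")  # 321.3 W
```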

The software used consists of my own interpreter and evaluation software, plus a very extensive and flexible Excel sheet for the graphical presentation. I have also summarized the individual components of the test system in a table:

| Test System and Equipment | |
|---|---|
| Hardware: | AMD Ryzen 9 5950X OC; MSI MEG X570 Godlike; 2x 16 GB Corsair DDR4 4000 Vengeance RGB Pro; 1x 2 TB Aorus (NVMe system SSD, PCIe Gen. 4); 1x 2 TB Corsair MP400 (data); 1x Seagate FastSSD Portable USB-C; Be Quiet! Dark Power Pro 12 1200 W |
| Cooling: | Alphacool Eisblock XPX Pro; Alphacool Eiswolf (modified); Thermal Grizzly Kryonaut |
| Case: | Raijintek Paean |
| Monitor: | BenQ PD3220U |
| Power Consumption: | Oscilloscope-based system: non-contact direct current measurement on the PCIe slot (riser card); non-contact direct current measurement at the external PCIe power supply; direct voltage measurement at the respective connectors and at the power supply unit; 2x Rohde & Schwarz HMO 3054, 500 MHz multichannel oscilloscope with memory function; 4x Rohde & Schwarz HZO50, current clamp adapter (1 mA to 30 A, 100 kHz, DC); 4x Rohde & Schwarz HZ355, probe (10:1, 500 MHz); 1x Rohde & Schwarz HMC 8012, HiRes digital multimeter with memory function; MCU-based shunt measuring (own build, Powenetics software); NVIDIA PCAT and FrameView 1.1 |
| Thermal Imager: | 1x Optris PI640 + 2x Xi400 thermal imagers; Pix Connect software; Type K Class 1 thermal sensors (up to 4 channels) |
| Acoustics: | NTI Audio M2211 (with calibration file); Steinberg UR12 (with phantom power for the microphones); Creative X7; Smaart v.7; own anechoic chamber, 3.5 x 1.8 x 2.2 m (L x D x H); axial measurements, perpendicular to the centre of the sound source(s), measuring distance 50 cm; noise emission in dBA (slow) as RTA measurement; frequency spectrum as graphic |
| OS: | Windows 10 Pro (all updates, current certified or press drivers) |

- 1 - Introduction and technical details

- 2 - Teardown: PCB, power delivery, cooler

- 3 - Borderlands 3

- 4 - Control (+DXR)

- 5 - Far Cry New Dawn

- 6 - Ghost Recon Breakpoint

- 7 - Horizon Zero Dawn

- 8 - Metro Exodus (+DXR)

- 9 - Shadow of the Tomb Raider

- 10 - Watch Dogs: Legion (+DXR)

- 11 - Wolfenstein Youngblood

- 12 - World War Z

- 13 - Power consumption and efficiency in gaming

- 14 - Power consumption, voltages and standards compliance

- 15 - Load peaks and PSU recommendation

- 16 - Clock rates and temperatures

- 17 - Fans and noise emission ('loudness')

- 18 - Overview, summary and conclusion
