The problem here, as always, is the desire, the ability and the permission to write about details. That I took myself out of the race for a few days is probably also because a lot of this has become a very difficult balancing act. Publishing without reflection everything that is leaked on Twitter, or more likely just speculated, is not quite my thing, not to say not my thing at all. Taken together and compared against my own state of knowledge, the presumed specifications of the GeForce RTX 3090, RTX 3080 and a new Titan RTX are more wishful thinking than reality, particularly since much of it had already been assumed and published by other sources, including me.

What’s this about? The speculation from “KatCorgi” revolves around the GA102. According to it, there will be a new Titan RTX (GA102-400-A1), a GeForce RTX 3090 (GA102-300-A1) and a GeForce RTX 3080 (GA102-200-Kx-A1). As the flagship, the new Titan is said to be based on 5,376 shader units and thus 84 SMs; the Turing-based Titan RTX offers 4,608 shaders. Memory capacity should again be 24 GB, now using GDDR6X at a specified 17 Gbps. On a 384-bit interface, this would result in a bandwidth of 816 GB/s, compared to the 672 GB/s of the current Titan RTX. The shader counts are pure speculation, and that’s exactly why I won’t go into them any further.
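The bandwidth and SM figures above can be checked with a few lines of arithmetic. The following is only a minimal sketch: the function names are made up for illustration, and the 14 Gbps data rate of the current Titan RTX is not stated in the text but added here as an assumption to reproduce the 672 GB/s figure.

```python
def memory_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: bus width times per-pin data rate, divided by 8 bits per byte."""
    return bus_width_bits * data_rate_gbps / 8


def shaders_per_sm(shaders: int, sms: int) -> float:
    """Shader units per SM implied by a rumored shader and SM count."""
    return shaders / sms


# Rumored new Titan: 384-bit interface at 17 Gbps (figures from the text).
rumored_titan = memory_bandwidth_gb_s(384, 17)

# Current Titan RTX: 384-bit at 14 Gbps (data rate is an assumption of this sketch).
current_titan = memory_bandwidth_gb_s(384, 14)

print(f"Rumored Titan:  {rumored_titan:.0f} GB/s")
print(f"Current Titan:  {current_titan:.0f} GB/s")
print(f"Shaders per SM: {shaders_per_sm(5376, 84):.0f}")
```

The rumored 5,376 shaders on 84 SMs work out to 64 shaders per SM, so at least the two numbers are consistent with each other, which says nothing about whether they are correct.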

> 2nd Gen NVIDIA TITAN: GA102-400-A1 5376 24GB 17Gbps
>
> GeForce RTX 3090: GA102-300-A1 5248 12GB 21Gbps
>
> GeForce RTX 3080: GA102-200-Kx-A1 4352 10GB 19Gbps
>
> KatCorgi (@KkatCorgi), June 19, 2020

What’s wrong with that?

For a long time now, many of my sources have been talking about a SKU10, SKU20 and SKU30, with the SKU10 being the largest and strongest chip. However, only the SKU10 and the SKU30 have been confirmed from a technical point of view, and their data roughly match those in the current “leak”, while validated data for the SKU20 is hardly obtainable. Logical, actually. If you credit NVIDIA with a little malice and caution, you are more likely to believe in a green sandwich for Big Navi.

I’m pretty sure that a new commercial RTX series will be launched in August at this year’s virtual SIGGRAPH. The board for the consumer offshoots, also known as PG132 (PG133 for the Founders Editions), is designed for the SKU10 as the strongest chip in the maximum configuration and can then be “slimmed down” for the models below it. NVIDIA’s information on its competitor has always been very reliable and frighteningly good, so it will probably want to cap things from the top with the SKU10 right from the start, while rounding off the bottom end with the SKU30.

The previously missing SKU20 can now be handled flexibly. Since NVIDIA has long relied on flexible hardware straps to “crop” the GPU, this chip could be “designed” as a special SKU20 after a successful Navi launch without much effort. One memory controller, or none, can be disabled, with the logical consequences for the SMs and thus the CUDA cores. NVIDIA is therefore in the very comfortable position of being able to wait and see what AMD does.

The fact that NVIDIA is going to market right away with the SKU10, i.e. the Titan RTX II or the working title RTX “3090”, does however indirectly confirm that AMD could release something quite competitive.

What follows from a maximum TGP of 350 watts and GDDR6X?

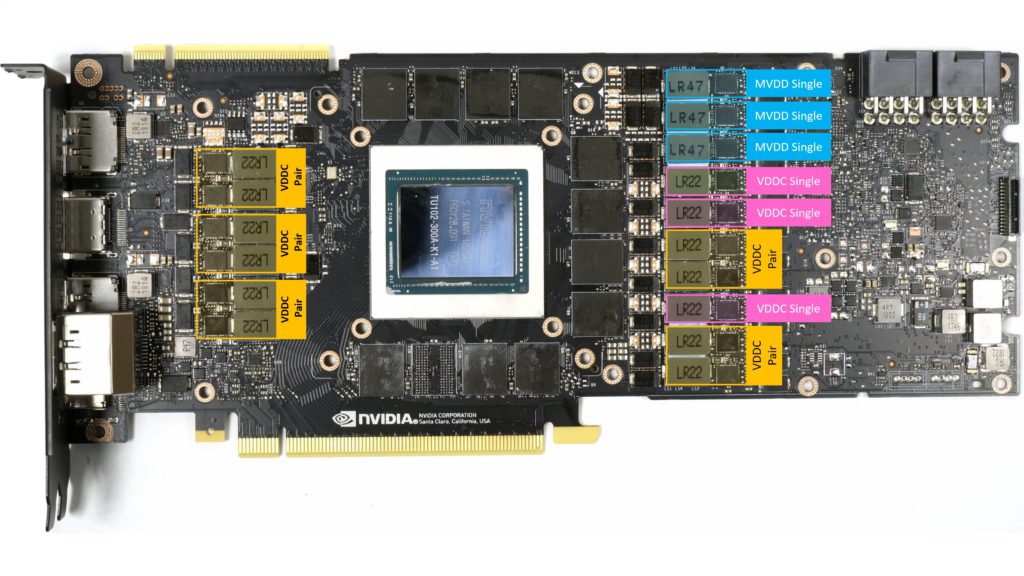

I had already calculated that the 350 watt maximum TGP for the SKU10 is quite plausible. With up to 60 watts for the memory in the full configuration, you would even need 3 phases for its power supply. Depending on the SKU and RAM configuration, one can therefore assume a minimum of 2 and a maximum of 3 memory phases. For the GPU, one can take the 8 phases of the Turing generation as a given. In view of the GPU’s high power draw of up to 230 watts, however, I would guess that there are two parallel voltage converter circuits per phase, which would give a maximum of 16 voltage converter circuits for the SKU10.
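To make the phase arithmetic easier to follow, here is a rough sketch. The `Rail` class and its fields are hypothetical names chosen for illustration, the 230 watt figure is treated as the load on the GPU phases (an assumption of this sketch), and conversion losses and rail voltages are ignored entirely.

```python
from dataclasses import dataclass


@dataclass
class Rail:
    """One supply rail on the board (hypothetical model, illustration only)."""
    name: str
    power_w: float               # estimated load on this rail in watts
    phases: int                  # number of controller phases
    circuits_per_phase: int = 1  # parallel voltage converter circuits per phase

    @property
    def circuits(self) -> int:
        return self.phases * self.circuits_per_phase

    @property
    def watts_per_circuit(self) -> float:
        # Naive even split; ignores losses and current limits.
        return self.power_w / self.circuits


# Figures from the text: 8 GPU phases with 2 circuits each, 3 memory phases.
gpu = Rail("GPU", power_w=230, phases=8, circuits_per_phase=2)
memory = Rail("memory", power_w=60, phases=3)

layout = f"{gpu.circuits}+{memory.circuits}"
print(f"Maximum layout: {layout}")
for rail in (gpu, memory):
    print(f"{rail.name}: {rail.circuits} circuits, "
          f"{rail.watts_per_circuit:.1f} W per circuit")
```

Setting `memory.phases` to 2 gives the smaller variant mentioned for reduced RAM configurations.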

This makes a possible 16+3 design for the maximum configuration. But to split that up, you would need both sides next to the memory, and you’d be right back between the video ports and the memory, exactly where some people want to see the co-processor. Which elegantly closes the circle of the impossible again. On the RTX 2080 Ti, no memory sits to the left of the GPU; it sits below it instead (picture above). NVIDIA will certainly not make this mistake again (Space Invaders).

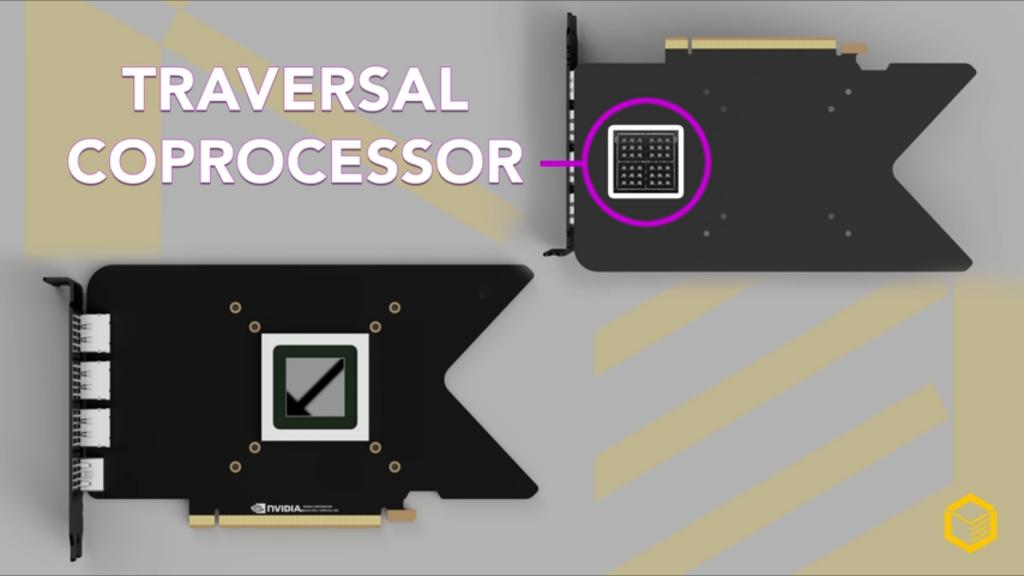

The co-processor as a wonder weapon?

You don’t even have to risk breaching possible NDAs to see all this as some kind of comedy. If you follow the usual and proven board designs of recent years, this special RT chip (how would it even be connected with the required performance?) would sit behind the voltage converters needed for the GPU. Above all, positioning it there is simply impossible, both electrically and thermally. That’s really all there is to it. I could have done without the picture, but I’m posting it anyway to illustrate what I’ve said.

Interim conclusion

I currently see only the SKU10 and SKU30 as set, and I also suspect that we will only see two cards at the launch in a few months. The third card with the SKU20 will probably not be brought to market until the performance of the new Navi cards is really known. The speculation about this RTX “3080 Super/Ti” is therefore quite superfluous, because both a 384-bit and a 352-bit interface are technically within the realm of possibility.

The fact that this variant is still so little known, unlike the others, reinforces the theory of NVIDIA’s wait-and-see approach all the more.
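The two interface widths mentioned for the SKU20 differ by exactly one memory controller, assuming 32-bit controllers as on Turing. The sketch below illustrates that relationship; the function name is hypothetical, and the 17 Gbps data rate is only a placeholder borrowed from the Titan rumor, since no validated data for the SKU20 exists.

```python
CONTROLLER_WIDTH_BITS = 32  # assumption: one 32-bit controller per memory channel


def bus_config(full_width_bits: int, disabled_controllers: int,
               data_rate_gbps: float) -> tuple[int, int, float]:
    """Return (active controllers, bus width in bits, peak bandwidth in GB/s)."""
    total = full_width_bits // CONTROLLER_WIDTH_BITS
    active = total - disabled_controllers
    width = active * CONTROLLER_WIDTH_BITS
    bandwidth = width * data_rate_gbps / 8
    return active, width, bandwidth


PLACEHOLDER_RATE_GBPS = 17  # not a SKU20 figure, illustration only

for disabled in (0, 1):
    active, width, bandwidth = bus_config(384, disabled, PLACEHOLDER_RATE_GBPS)
    print(f"{disabled} disabled: {active} controllers, "
          f"{width}-bit, {bandwidth:.0f} GB/s")
```

Either outcome is a strap setting on the same chip, which is why the choice can be left open until the competition is known.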
