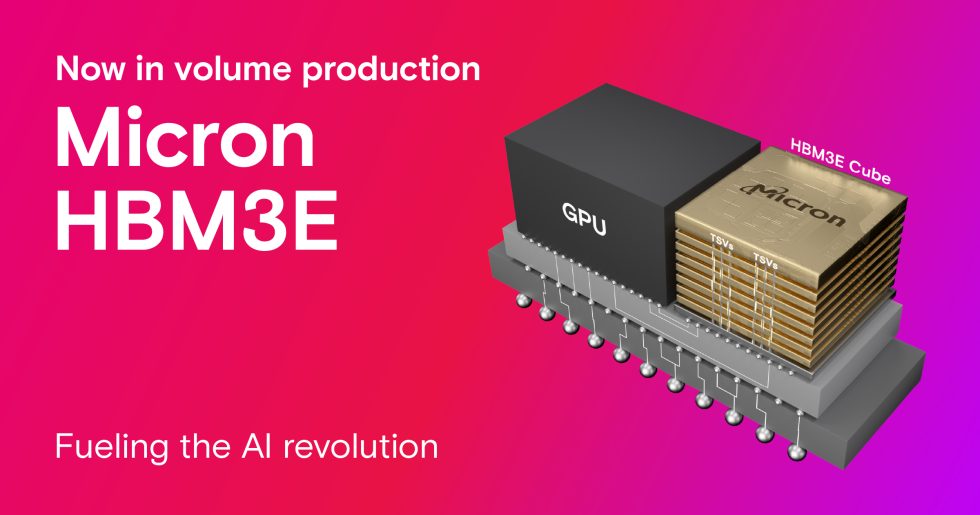

Micron Technology recently announced that it has started mass production of its High Bandwidth Memory 3E (HBM3E). Micron’s 24 GB 8H HBM3E will become a component of the NVIDIA H200 Tensor Core GPUs.

The NVIDIA H200 series with Micron’s HBM3E is scheduled to ship in the second quarter of 2024. According to Micron, its HBM3E consumes about 30% less power than competing offerings. The pin speed is said to exceed 9.2 gigabits per second, which enables a memory bandwidth of more than 1.2 terabytes per second. The aim is very fast data access for AI accelerators, supercomputers and data centers, which should shorten the training of massive neural networks and greatly accelerate inference tasks.
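The relationship between pin speed and bandwidth quoted above can be checked with a short calculation. The sketch below assumes the 1024-bit interface per stack that HBM generations up to HBM3E use; that width is not stated in Micron's announcement, and the function name is purely illustrative.

```python
def stack_bandwidth_gb_per_s(pin_speed_gbit_per_s: float,
                             bus_width_bits: int = 1024) -> float:
    """Peak bandwidth of one HBM stack in gigabytes per second.

    bus_width_bits defaults to 1024, an assumption based on the usual
    HBM interface width, not a figure from the announcement.
    """
    # Every pin transfers pin_speed gigabits per second; divide by 8 for bytes.
    return pin_speed_gbit_per_s * bus_width_bits / 8


if __name__ == "__main__":
    bandwidth = stack_bandwidth_gb_per_s(9.2)
    print(f"{bandwidth:.1f} GB/s, roughly {bandwidth / 1000:.2f} TB/s per stack")
```

At exactly 9.2 Gb/s per pin this comes to just under 1.2 TB/s, so the quoted figure of more than 1.2 TB/s relies on the pin speed being somewhat above 9.2 Gb/s.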

Sumit Sadana, Executive Vice President and Chief Business Officer at Micron, named three pillars of the HBM3E milestone: market leadership, industry-leading performance and a differentiated energy efficiency profile. He also said that AI workloads depend heavily on memory bandwidth and capacity, and that Micron is therefore well positioned to support the significant growth in AI through its HBM3E and HBM4 roadmap and its comprehensive portfolio of DRAM and NAND solutions for AI applications.

The HBM3E design is based on Micron’s 1-beta (1β) process technology, advanced through-silicon vias (TSVs) and other innovations that enable a differentiated packaging solution. Micron describes itself as a recognized leader in memory for 2.5D/3D stacking and advanced packaging technologies, and it is a partner in TSMC’s 3DFabric Alliance.

In addition, Micron plans to start sampling a 36 GB 12-high HBM3E in March 2024. It is likewise expected to deliver more than 1.2 TB/s of bandwidth along with high energy efficiency. Micron is also a sponsor of NVIDIA GTC, a global AI conference that begins on March 18, where the company intends to share more about its AI memory portfolio and roadmaps.
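The 24 GB and 36 GB capacities follow from the stack heights. As a minimal sketch, assuming each DRAM die in the stack holds 24 Gbit (a figure not given in this article) and using an illustrative function name:

```python
def stack_capacity_gb(dies: int, die_density_gbit: int = 24) -> float:
    """Capacity of one HBM stack in gigabytes.

    die_density_gbit defaults to 24, an assumed per-die density that is
    not taken from the article.
    """
    # Total gigabits across all stacked dies, converted to gigabytes.
    return dies * die_density_gbit / 8


if __name__ == "__main__":
    for height in (8, 12):
        print(f"{height}-high stack: {stack_capacity_gb(height):.0f} GB")
```

Under that assumption, the 8-high stack yields 24 GB and the 12-high stack 36 GB, which matches the two products named in the announcement.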

Source: Micron
