What is really behind the figures for Thermal Design Power (TDP) or GPU Power, Total Board Power (TBP) and Total Graphics Power (TGP) on AMD and Nvidia graphics cards? And who actually adheres to their self-imposed guidelines? There are already a few articles that cover the topic in more or less depth. But what is really the point of it all?

The trigger for this article, by the way, was a recently circulated apples-and-pears comparison from Nvidia's PR slide department (which I will also refute at the end). But one thing at a time, because as always, the final tally only comes at the end.

For more than 5 years now, I have been measuring the power consumption of graphics cards in ever greater detail, often sitting for hours over board analyses before launches and doing a lot of "reverse engineering", because the documents are either simply missing or simply confidential. Nvidia in particular is almost exemplary in this respect, and it is always a small motivation to work out what the layout designers and developers were thinking.

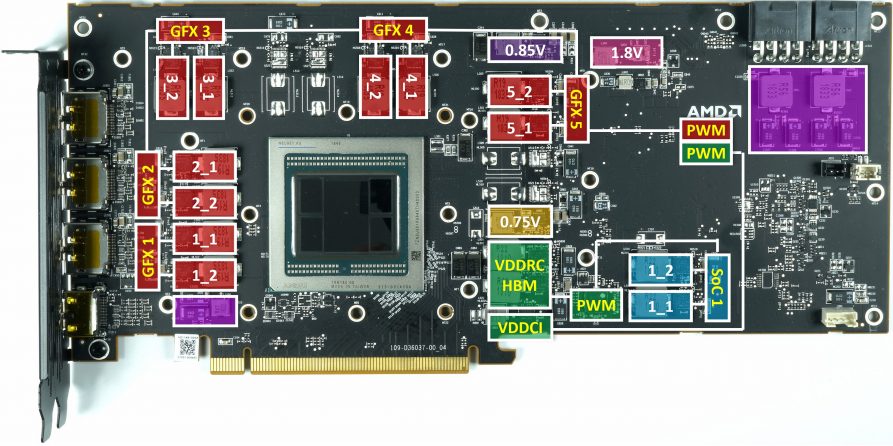

If we compare the two diagrams, it is immediately noticeable that the two manufacturers take very different approaches and that a direct comparison of the individual sub-areas is difficult rather than easy. But I'll get to that in a moment.

The power consumption of the entire card (TBP and TGP)

This is still quite easy to measure and verify, and I have written countless articles on the subject. What really sets Nvidia apart from AMD, however, is the regulation and control mania of Nvidia's graphics cards, where the respective power target (Default Power Limit) and the maximum power limit (Max Power Limit) are stored in the firmware. In the worst case, Default Power Limit and Max Power Limit are identical, and then the slider for raising the power limit is no longer available in the usual overclocking tools.
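As a rough illustration of why the slider disappears, here is a minimal Python sketch of the headroom between the two firmware values. The function name and the wattages in the usage note are hypothetical and not taken from any real firmware or tool.

```python
def power_limit_headroom(default_w: float, max_w: float) -> float:
    """Return how far the power limit can be raised, in percent of the default.

    default_w and max_w stand in for the Default Power Limit and the
    Max Power Limit stored in the firmware (illustrative values only).
    """
    if default_w <= 0:
        raise ValueError("default power limit must be positive")
    if max_w < default_w:
        raise ValueError("max power limit cannot be below the default")
    return (max_w / default_w - 1.0) * 100.0
```

With an assumed default of 200 W and a maximum of 250 W, this gives 25 percent of headroom. If both values are identical, the result is 0 percent, which corresponds to the case where there is nothing left for a slider to adjust.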

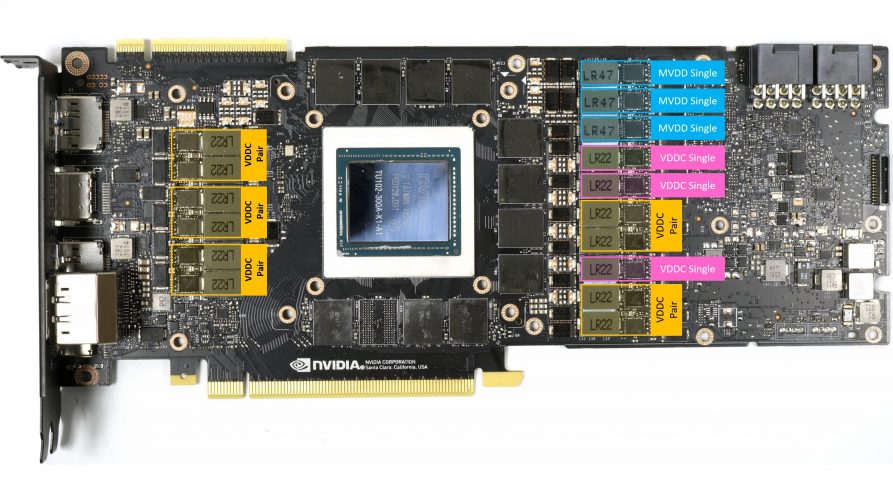

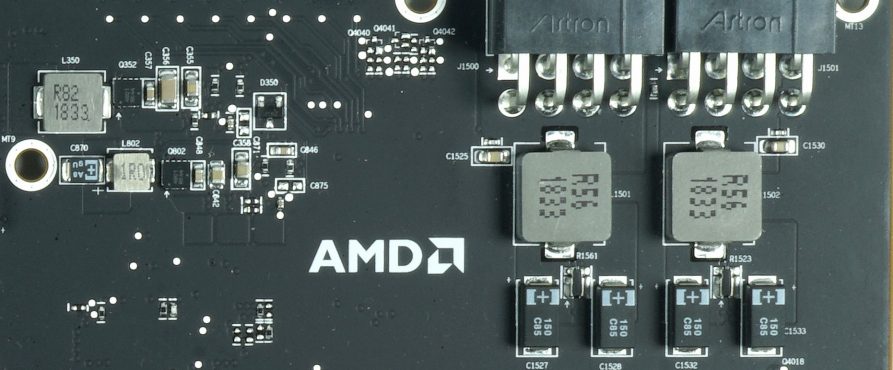

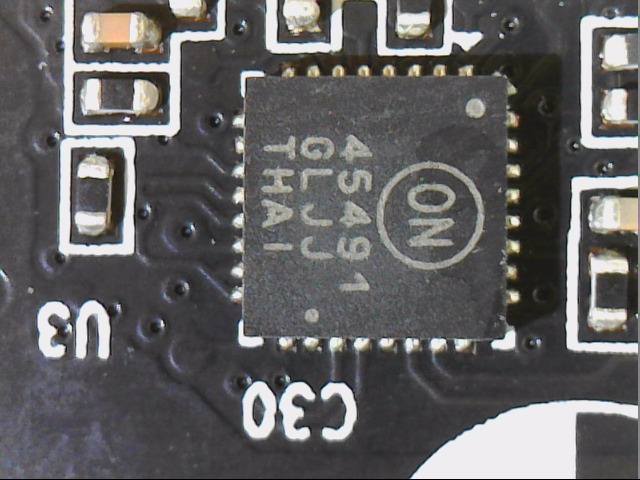

In contrast to AMD, Nvidia meticulously monitors all 12-volt inputs by means of a dedicated monitoring chip, which measures the voltage both before and after the shunt (a very low-value resistor) and thus also evaluates the voltage drop across it (from which the current flow is calculated). Since there is such a shunt in every 12-volt supply line, the exact power consumption of the board on the 12-volt rails can be measured and also used for the internal power limiting. We see on the left a shunt with a series inductor for input smoothing and on the right an NCP45491 from ON Semi for monitoring the voltages and currents.
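The shunt measurement boils down to Ohm's law. The following Python sketch shows the arithmetic only, under assumed values: the 5 mΩ shunt resistance and the voltages in the usage note are made up for illustration, and the class and function names are hypothetical rather than anything the monitoring chip or the firmware exposes.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class ShuntReading:
    """One 12 V supply line, sampled on both sides of its shunt."""
    v_before: float  # volts, measured upstream of the shunt
    v_after: float   # volts, measured downstream of the shunt
    r_shunt: float   # ohms, the shunt's (very low) resistance


def rail_current(reading: ShuntReading) -> float:
    """Current in amperes, from the voltage drop across the shunt (I = U / R)."""
    return (reading.v_before - reading.v_after) / reading.r_shunt


def rail_power(reading: ShuntReading) -> float:
    """Power in watts that reaches the board through this rail."""
    return reading.v_after * rail_current(reading)


def board_power_12v(readings: Iterable[ShuntReading]) -> float:
    """Sum over all monitored 12 V supply lines."""
    return sum(rail_power(r) for r in readings)
```

For example, an assumed reading of 12.05 V before and 12.00 V after a 0.005 Ω shunt corresponds to about 10 A, or roughly 120 W on that rail. Summing the rails gives the 12-volt board power that the firmware can then compare against the power limit.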

Exactly this measured value is never exceeded in practice (and cannot be), because the very attentive and fast-regulating firmware makes sure of that. Nvidia does make things a little easy for itself here, because it assumes that current graphics cards draw significant power only from the 12 V rails. Certain graphics card manufacturers then take advantage of this by supplying individual circuit blocks via the 3.3-volt rail in order to leave more headroom for the GPU, even if, as with the MSI GeForce GTX 1650 Gaming X, it's just under 5 watts, because you don't want things like RGB and other frills counted against the graphics power. But every little bit adds up.

What many do not consider: fans, RGB floodlights, microcontrollers and displays are also included in this calculation. The more colorful and garish such a gaming show-off is, the less power is available to the GPU! If you then invest 10 watts (or more) in fans howling at full speed, you may gain a boost step thanks to the lower temperature, but it is precisely this power that you unwittingly take away from the GPU. You can see this very nicely with custom water-cooled cards that have been stripped of their stock cooling and lighting. In some cases I was able to measure savings of over 15 watts, which of course benefit the GPU without having to raise the power limit manually. This is like the heating in an electric car: those who freeze drive farther.

AMD's Radeon cards do not have such a limit. The power limit slider (in percent) refers to the GPU and its power consumption, and all the peripheral ballast is conveniently left out. You can judge that however you like, but it at least leaves more room for the end user and for the faction of lighting fetishists and fan worshippers.

We can already see from the handling of the TBP that things are not taken nearly as strictly on the Radeon cards as they are with Nvidia. If the peripherals, including the fans, stay frugal, the GPU may help itself to more on an Nvidia card, but otherwise not. With AMD, the GPU itself is monitored (rather generously) and the rest of the board is allowed to suckle on the power cable as it pleases. Jesuit Boarding School vs. Waldorf School Association.
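The difference between the two philosophies can be put into a few lines of arithmetic. This is a deliberately simplified Python sketch: it lumps everything that is not the GPU (fans, RGB, microcontrollers and so on) into a single "peripherals" figure, and all names and wattages are assumptions for illustration, not measured values.

```python
def gpu_budget_board_limited(board_limit_w: float, peripherals_w: float) -> float:
    """Nvidia-style: the limit covers the monitored board power,
    so every watt spent on peripherals is a watt less for the GPU."""
    return max(board_limit_w - peripherals_w, 0.0)


def board_draw_gpu_limited(gpu_limit_w: float, peripherals_w: float) -> float:
    """AMD-style: the limit covers only the GPU,
    so peripherals are simply added on top of it."""
    return gpu_limit_w + peripherals_w


# Hypothetical card with a 250 W board limit:
stock = gpu_budget_board_limited(250.0, 20.0)   # fans and lighting at full tilt
custom = gpu_budget_board_limited(250.0, 5.0)   # stock cooler and RGB removed
print(custom - stock)  # 15.0 W more for the GPU at the same power limit
```

In the board-limited case the GPU gains exactly what the peripherals give up, which is the effect seen on water-cooled cards. In the GPU-limited case the GPU's share stays the same and only the total draw of the board changes.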

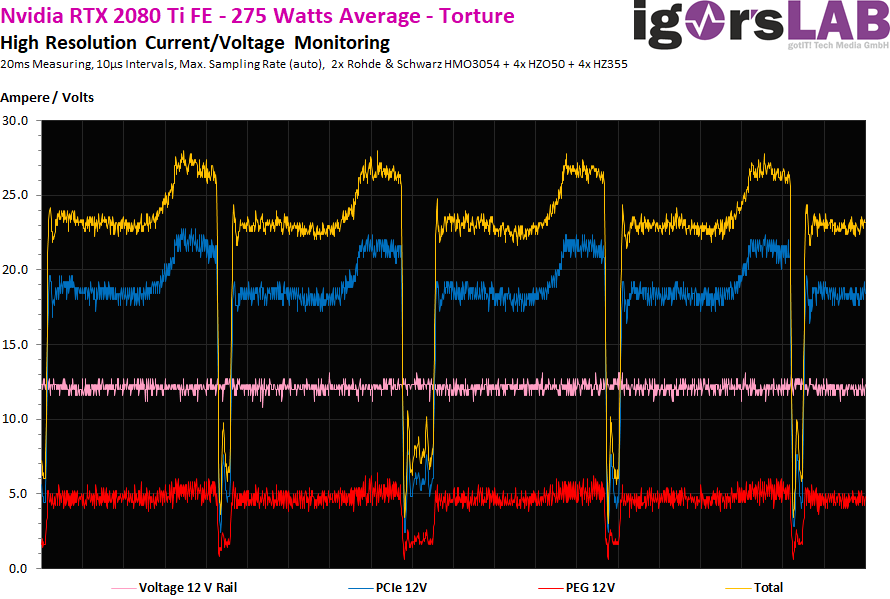

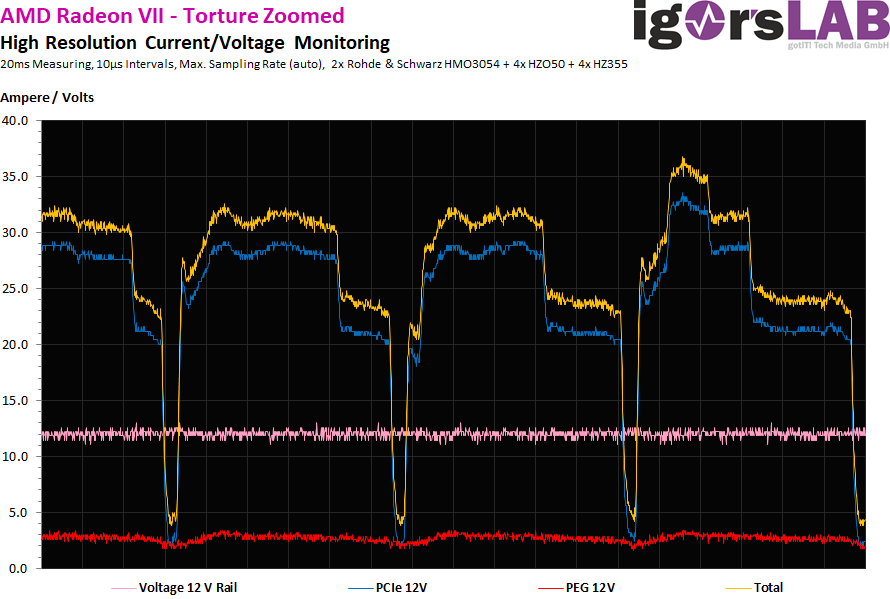

The restrictions and their consequences can be seen very nicely in the stress test. With the Nvidia cards, the regulation is visibly faster, but also much more restrictive. We see the current rise and then, from a certain threshold, get cut back abruptly and drastically. The resulting dip also lasts a touch longer than on the Radeon VII. We also see that the GeForce even includes the motherboard slot (red curve).

The Radeon VII's regulation is somewhat more hesitant. First a slight reduction takes place, and if that still does not help because the load stays constant during the stress test, the card then regulates down for a short time. The motherboard slot remains virtually untouched, as AMD feeds the Radeon VII's GPU only from the two 8-pin connectors.

But even with the voltage converters, Nvidia's compulsion to control knows no bounds, and so the larger GeForce RTX cards are covered by almost complete monitoring. But more on that on the next page.
