In an interview with Tom’s Hardware, AMD’s CTO Mark Papermaster gave some insights into the company’s future plans. These include hybrid chip designs, higher core counts, and the use of artificial intelligence (AI) in both chip design and manufacturing. One important aspect Papermaster mentioned is hybrid chip designs, which combine different types of computing cores on a single chip. In the future, AMD could develop CPUs that contain both powerful CPU cores for general tasks and specialized accelerators for specific applications. Such hybrid designs could further improve the efficiency and performance of AMD processors.

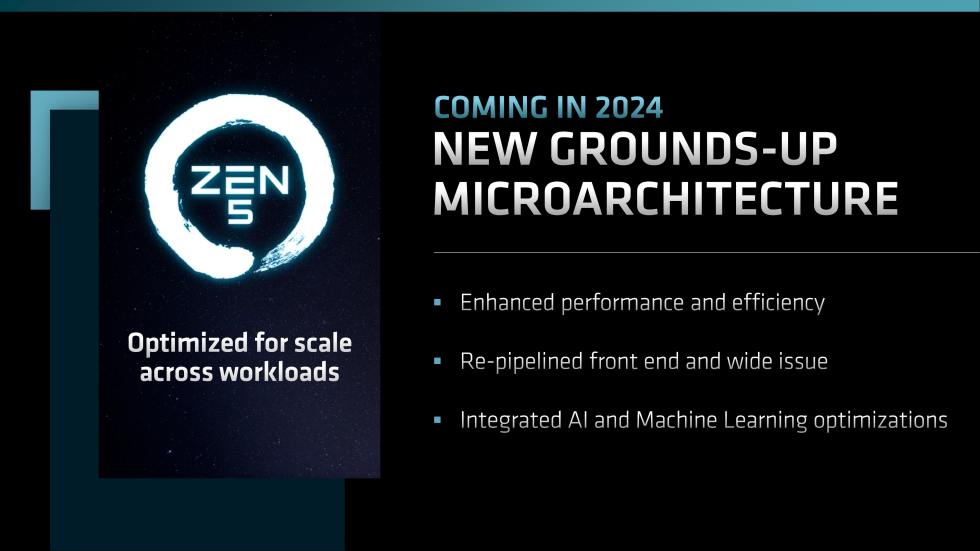

Papermaster points out that we have reached a point where a single chip design is no longer sufficient to meet all requirements. In the server segment in particular, the company offers an extensive selection of solutions in its Zen 4 EPYC series. These include the classic Zen 4 Genoa, Genoa-X with 3D V-Cache, Bergamo with Zen 4C cores, and Siena for TCO- and energy-optimized platforms. According to rumors, the upcoming Zen 5 and Zen 5C series will offer an even wider range of EPYC products.

In future processors, AMD plans to vary not only the core density, as it already does with Zen 4 and Zen 4C, but also the type of cores themselves. This approach is similar to what Intel and Apple are doing with their current CPU generations, where powerful cores are combined with energy-efficient cores to achieve maximum efficiency. With this hybrid approach, AMD could also stack multiple 3D layers that contain either cache or specialized accelerators for different workloads.

AMD has confirmed that technology enabling higher core counts will continue to advance. However, the company stresses that this is not the only path that future chips will take. While a higher core count may be important for some customers, others may need the same number of cores but additional acceleration capabilities. Papermaster confirms that the current Ryzen 7040 CPUs give a taste of this hybrid technology and that we will see more of it in the future.

But what you’ll also see is more variations of the cores themselves, you’ll see high performance cores mixed with power efficient cores mixed with acceleration. So where, Paul, we’re moving to now is not just variations in core density, but variations in the type of core, and how you configure the cores. Not only how you’ve optimized for either performance or energy efficiency, but stacked cache for applications that can take advantage of it, and accelerators that you put around it.

When you go to the data center, you’re also going to see a variation. Certain workloads move more slowly, you might be having a business where you haven’t yet adopted AI and you’re running transaction processing, closing your books every cycle, you’re running an enterprise, you’re not in the cloud, and you might have a fairly static core count. You might be in that sweet spot of 16 to 32 cores on a server. But many businesses are indeed adding point AI applications and analytics. As AI moves from not only being in the cloud, where the heavy training and large language model inferencing will continue, but you’re going to see AI applications in the edge. And you know, it’s going to be in enterprise data centers as well. They’re also going to need different core counts, and accelerators.

I really think I can sum it up by saying we see the technology continuing to enable core counts going forward, but that is not the sole path to meeting customer needs. It has to be application dependent, and you have to be able to provide customers with the kind of diversity of computation elements they need. And that’s CPUs, and different types of CPUs, along with accelerators. And you need to give them flexibility as to how they configure that solution based on the applications they are running.

Paul Alcorn: So, it’s probably safe to say that a hybrid architecture will be coming to client [consumer PCs] some time?

Mark Papermaster: Absolutely. It’s already there today, and you’ll see more coming.

AMD CTO Mark Papermaster (via Tom’s Hardware)

Papermaster explained that AMD is already using AI to help with chip design, and that this can lead to better designs. However, he stressed that the technology will not necessarily replace the work of human engineers. NVIDIA has made similar comments in the past and is also using AI in the development of its next-generation chips. NVIDIA is likewise looking to use AI to streamline and speed up the production of these chips.

The short answer to your question is, we’re going to solve all of those constraints and you’ll see more and more generative AI used in the very chip design process. It is being used in point applications today. But as an industry, over the next couple of years, next really one to two years, I think that we’ll have the proper constraints to protect IP and you’re going to start seeing production applications of generative AI to speed up the design process.

It won’t replace designers, but I think it has a tremendous capability to speed up design.

And will it speed future chip designs? Absolutely. But we have a few hurdles that we have to get our arms around in the short term.

AMD CTO Mark Papermaster (via Tom’s Hardware)

AMD has already stated that AI is its top strategic priority. With hybrid designs coming, it seems like AI will play a significant role for AMD in the future. The company has the potential to become one of the leading names in AI, and we look forward to seeing what it has to offer in the coming years.

Source: WccfTech
