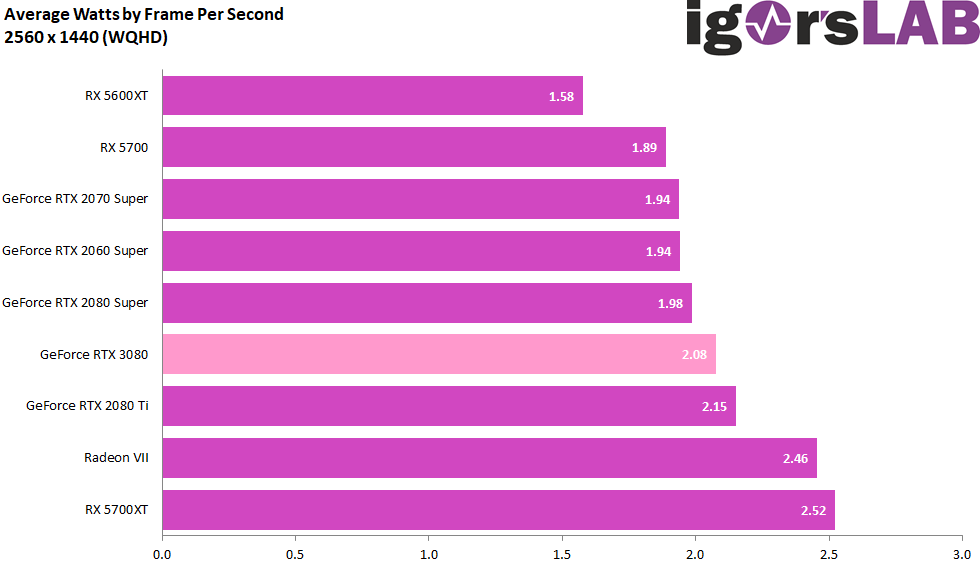

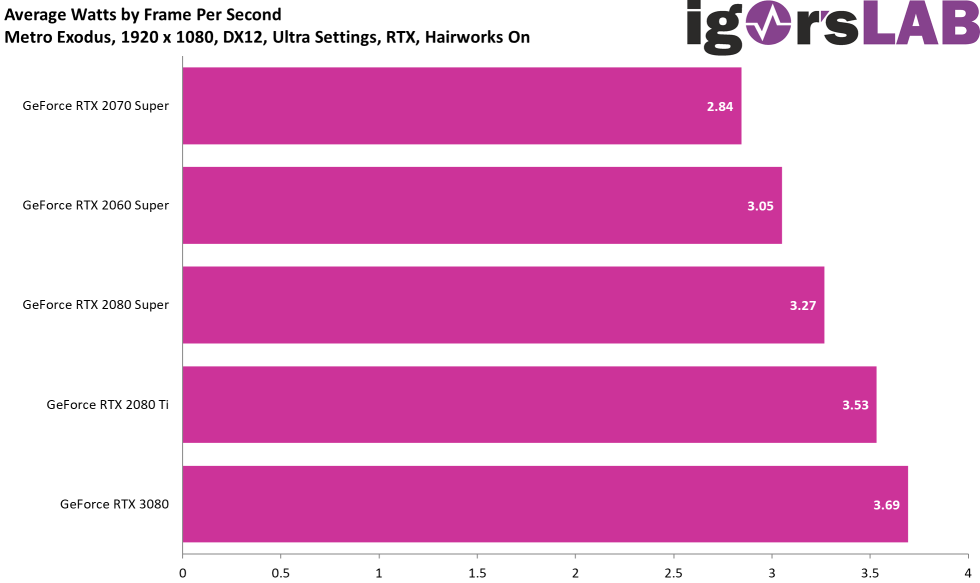

To assess efficiency as cleanly as possible, I also measured the power consumption in detail for every individual benchmark whose results we already know; those measurements were shown in detail on the previous page. But what can we expect if we now put the two values into a meaningful relationship? How many watts of power consumption does a single frame per second cost (more or less)? The result is quite astonishing.

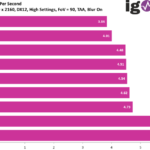

Games like Horizon Zero Dawn and Wolfenstein Youngblood, which scale particularly well with the GeForce RTX 3080, are the best indicator of what happens when things run well, from WQHD upwards. I only included World War Z because it is guaranteed to run into the CPU limit. And then, but only then, the GeForce RTX 3080 is unfortunately punished severely. It’s like driving through the city at 50 km/h in second gear. Hard on the tank or the battery, but it’s not the card’s fault.

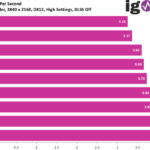

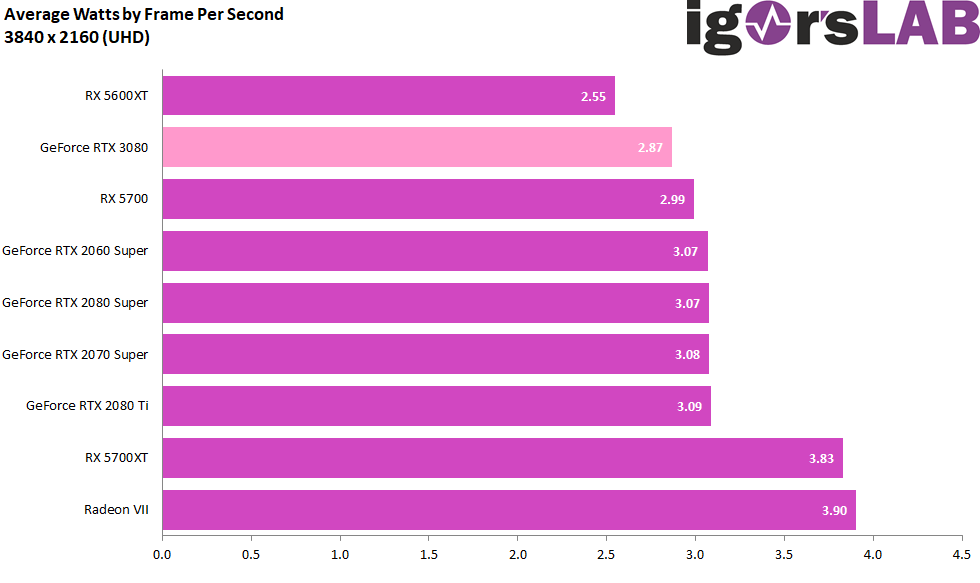

You can now add this up for all games and show a cumulative result:

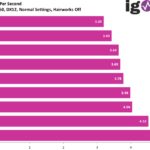
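As a rough illustration of the metric used in these charts, here is a minimal sketch of how watts per FPS could be computed per game and then combined into one cumulative figure. The `Run` type, the game names and all numbers are hypothetical placeholders, not measurements from this review, and dividing total power by total FPS is only one plausible way to aggregate; the exact method behind the chart is not stated here.

```python
from dataclasses import dataclass


@dataclass
class Run:
    """One benchmark run (hypothetical container, not from the review)."""
    game: str
    avg_power_w: float  # average card power draw in watts
    avg_fps: float      # average frames per second


def watts_per_fps(run: Run) -> float:
    """Power cost of a single frame per second for one run."""
    if run.avg_fps <= 0:
        raise ValueError("avg_fps must be positive")
    return run.avg_power_w / run.avg_fps


def cumulative_watts_per_fps(runs: list[Run]) -> float:
    """Assumed aggregation: total watts divided by total FPS over all runs."""
    total_power = sum(r.avg_power_w for r in runs)
    total_fps = sum(r.avg_fps for r in runs)
    if total_fps <= 0:
        raise ValueError("total FPS must be positive")
    return total_power / total_fps


# Placeholder values for illustration only.
runs = [
    Run("Game A", avg_power_w=320.0, avg_fps=160.0),
    Run("Game B", avg_power_w=300.0, avg_fps=100.0),
]

per_game = {r.game: watts_per_fps(r) for r in runs}
overall = cumulative_watts_per_fps(runs)
```

Lower values are better. A card that sits in the CPU limit keeps drawing power while the frame rate stalls, which is what pushes its watts per FPS up.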

In Ultra-HD, this brake is gone except in World War Z and Assassin’s Creed Odyssey, and lo and behold, the GeForce RTX 3080 is always among the front runners and in some games clearly leaves the rest behind. Incidentally, Assassin’s Creed Odyssey wouldn’t be an issue either if you played at “Extreme High” in Ultra-HD. Then the picture looks completely different again, because the CPU is no longer driven to its limit. The older Turing cards don’t look too bad either.

Here again I put it all together, and lo and behold, the GeForce RTX 3080 suddenly turns out to be quite efficient in Ultra-HD, because the rest of the field simply falls behind. And with the Radeon RX 5600 you win nothing except a green ribbon for running at its sweet spot, because hardly anything is playable in a sensible way.

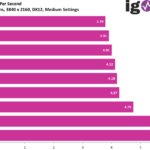

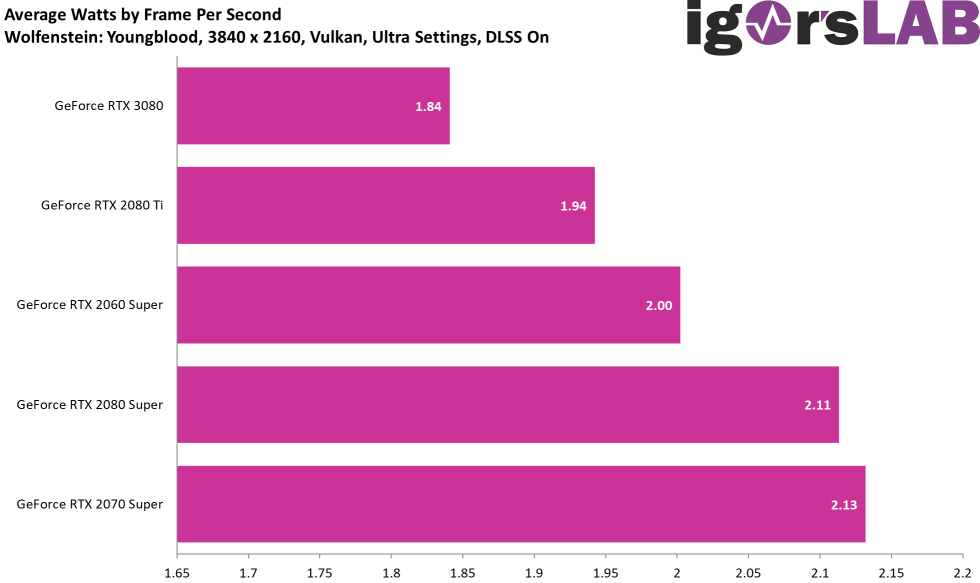

Save energy with DLSS? It works!

Let’s do a little experiment. In the gallery above, under Ultra-HD, you can find Wolfenstein Youngblood. Now let’s compare that with the values I measured for Ultra-HD with DLSS. While the GeForce RTX 3080 without DLSS still needed 2.35 watts per FPS, it now needs only 1.84 watts, so just 78.3%. This gain is of course not due to lower power consumption at identical FPS, but to the higher graphics performance at almost the same power consumption.
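The arithmetic behind that percentage can be checked with a few lines. This is only a sketch using the two watts-per-FPS values quoted above; the variable names are made up for illustration.

```python
# Watts per FPS for Wolfenstein Youngblood in Ultra-HD on the GeForce RTX 3080,
# as quoted in the text above.
W_PER_FPS_NATIVE = 2.35  # without DLSS
W_PER_FPS_DLSS = 1.84    # with DLSS

# Remaining power cost per frame per second, relative to the run without DLSS.
relative_cost = W_PER_FPS_DLSS / W_PER_FPS_NATIVE

# The same relationship seen from the other side: frames per watt with DLSS
# relative to frames per watt without it.
fps_per_watt_gain = W_PER_FPS_NATIVE / W_PER_FPS_DLSS

print(f"Relative cost per FPS: {relative_cost:.1%}")
print(f"FPS per watt factor:   {fps_per_watt_gain:.3f}")
```

The first value reproduces the 78.3% from the text. The second expresses the same result as frames delivered per watt, which rises by a bit more than a quarter.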

Related articles I have already published show, however, that a suitable frame limiter lets you turn exactly this effect into lower power consumption. If you limit the run with DLSS to the FPS achieved without DLSS, the power consumption drops by almost 20%.

- 1 - Introduction, Unboxing and Test System

- 2 - Teardown, PCB analysis and Cooler

- 3 - Gaming Performance: WQHD and Full-HD with RTX On

- 4 - Gaming Performance: Ultra-HD with and without DLSS

- 5 - FPS, Percentiles, Frame Time & Variances

- 6 - Frame Times vs. Power Consumption

- 7 - Workstation: CAD

- 8 - Studio: Rendering

- 9 - Studio: Video & Picture Editing

- 10 - Power Consumption: GPU and CPU in all Games

- 11 - Power Consumption: Efficiency in Detail

- 12 - Power Consumption: Summary, Transient Analysis and PSU Recommendation

- 13 - Temperatures and Thermal Imaging

- 14 - Noise and Sound Analysis

- 15 - NVIDIA Broadcast - more than a Gimmick?

- 16 - Summary, Conclusion and Verdict
