Overload at high FPS numbers on all cards

Now let’s move on to what many colleagues have unfortunately lumped together with the fan problem, even though it’s pure nonsense. Because the behavior of current graphics cards at exorbitantly high FPS numbers (e.g. in menus) and an emergency shutdown of the card (power good) or the triggering of the OCP in the PSU is anything but new. Again, of course, the values are more extreme in “New World” than usual, so it’s also more noticeable. It’s not new, though, and it’s certainly not game-dependent. Amazon’s “New World” is a closed beta and as such, of course, should be taken with a grain of salt when it comes to stability and potential bugs. Distributing a game without a limiter is negligent and downright stupid, but you always have to expect something like that in a beta as a tester. So from that point of view, you also have to distribute the pity and blame a little differently.

Which brings us to the topic at hand. I won’t repeat myself now, nor will I go through the functional scheme of Power Tune or Boost again. That would be boring and maybe even a bit confusing for non-technical people. Nevertheless, we need to talk about monitoring again. AMD and NVIDIA take a completely different approach here, albeit with similar results. Simplified: While AMD monitors the power consumption of the GPU and memory controller directly at the power gates, NVIDIA controls all external 12-volt supply lines in their entirety. With all the advantages and disadvantages.

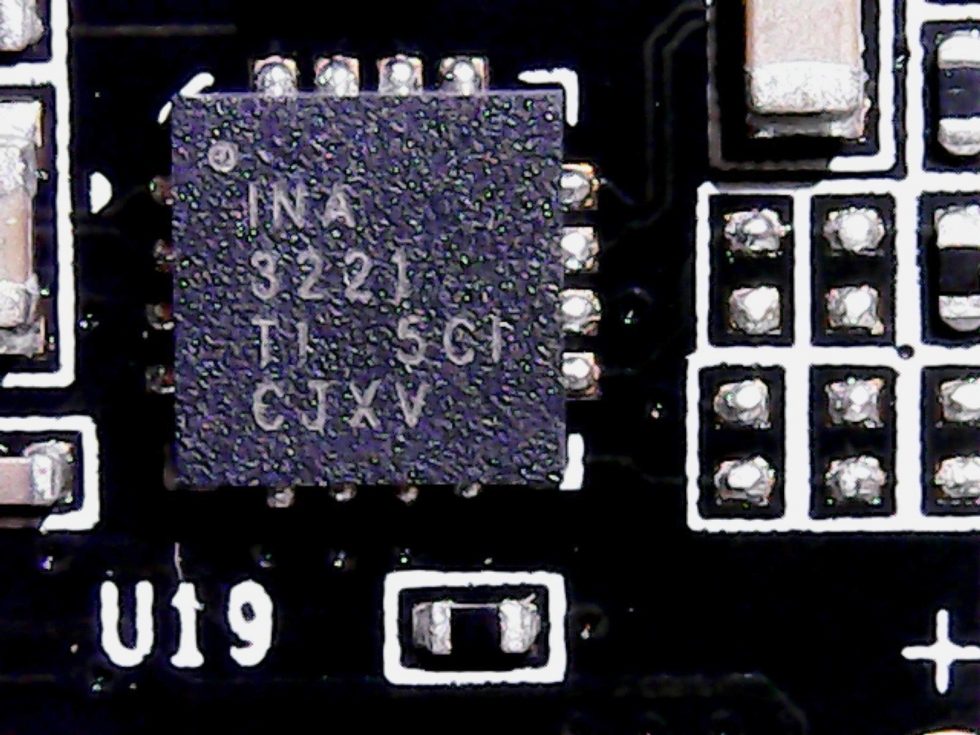

Until Pascal, the very slow INA3221 from Ti was almost always used for this purpose, which allowed monitoring in coarser ms intervals. But with ever faster load changes due to the ever faster cards, one was already faced with big problems with Pascal as to whether the OCP (or OPP) could still trigger in time. So these chips already reached their limits more and more often and there were already some problems with too fast load changes or too high FPS numbers.

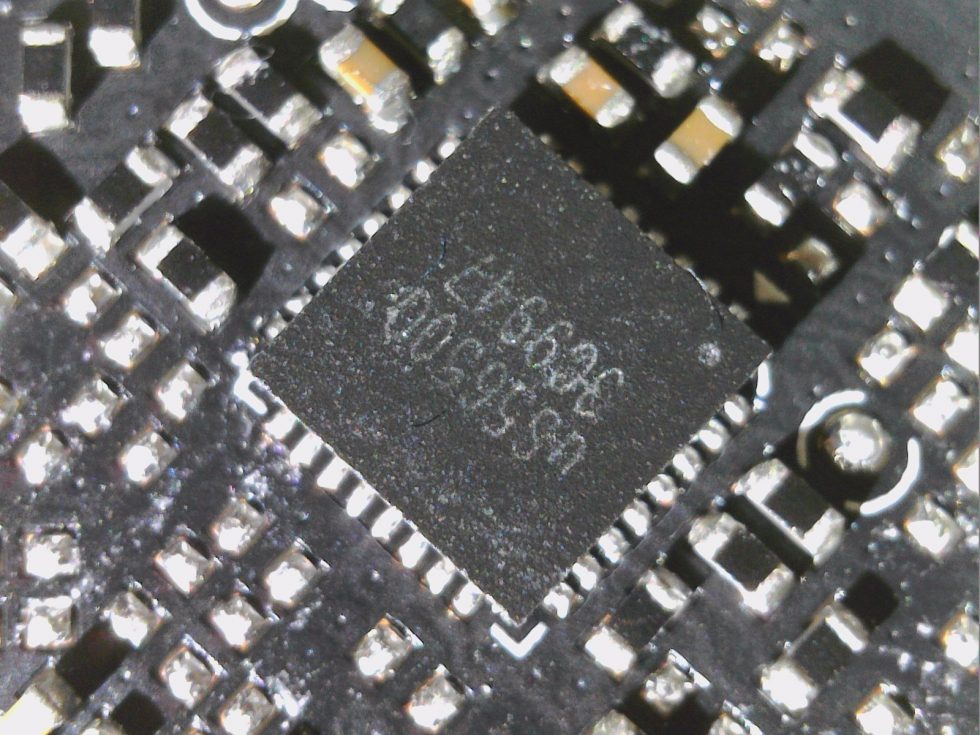

That’s why NVIDIA has reacted very quickly and since Turing has been using monitoring chips with a (theoretically) higher resolution by a factor of 1000. In the picture below we see UPI’s specially designed uS5650, which enables much shorter monitoring intervals, similar to On Semi’s NCP45491. However, only NVIDIA knows how short these really are in reality, because even in the specs and the base design kit for the board partners, such details are unfortunately not listed. So this is really secret, unfortunately.

Now, of course, one can monitor oneself to death and a too fast evaluation and calculation of the respective actual states also costs quite a lot of resources. So one can only assume that from about 1000 to 2000 FPS (i.e. already below 1 ms) inaccuracies could occur at identical render load, which finally do not include certain spikes anymore or one assumes a too low total load in the end. Shunt measurements in such short intervals are also subject to various errors, especially since the flowing currents are recorded on the primary side, i.e. before the voltage transformers, and the series coils and various capacitors are also located there. And it probably also explains why the measured power consumption can sometimes exceed the upper values for the power limit stored in the firmware (not only as a short peak).

Without going into more detail about the respective circuits, where I am also bound to various NDAs, one can definitely conclude that it must be a concern of the chip manufacturers to cut exactly that on the software side on the driver side, which does not always seem to be under control on the hardware side in such exceptional situations. This ranges from the power estimation engine in interaction with the all controlling arbitrator (firmware of the graphics cards) to the render pipeline and the respective interfaces of the drivers, because both NVIDIA and AMD already have functionally completely sufficient frame limiters.

These limiters could be set by default at 500 or 1000 FPS without affecting the latencies. If a user then picks this up at their own risk, it is solely (and subsequently in the event of shutdowns or damage) their own fault. Again, NVIDIA and AMD are equally affected, as the topic is too complex for a Sunday article. Here I will surely measure again more exactly.

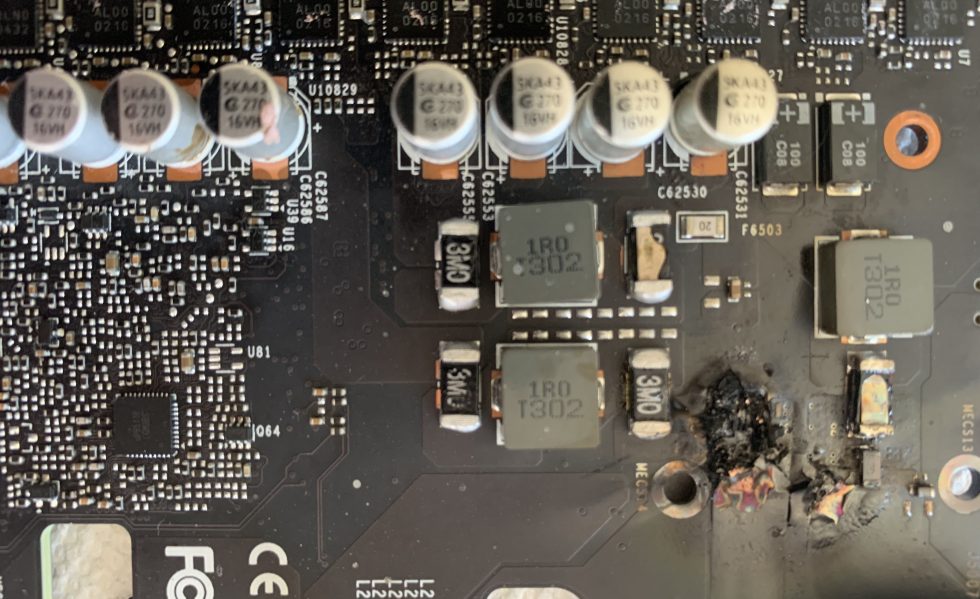

Anyone who tampers with shunts and thus renders protective circuits obsolete also deserves no sympathy whatsoever. The result then looked like this for “New World”, for example. It’s your own fault.

Summary and conclusion

It is certainly understandable for the reader that all parties involved did not push the whole thing further due to the expensive hardware and the current shortage. Especially since such a fragile limit sampling is also primarily the task of a manufacturer’s R&D department, in this case EVGA, and the customer must not mutate into a tester. It is commendable if the manufacturer offers an exchange of the defective cards. But due to the current facts, he would probably have no other choice anyway, which means that the noble gesture would then turn into a normal recall.

I can only advise any owner of one of the affected models to contact EVGA prophylactically when the fan problem occurs and not wait until it comes to a final damage. After several conversations with technicians of other board partners, one can also come to the conclusion that this problem should be solvable with a firmware update. One can only hope that EVGA will position itself more clearly here and not blame everything on Amazon again. Because that is exactly what is wrong, even if the PR has gratefully picked it up.

And to the rest, I like to give the advice not to become all problems tigether in a pot and to question certain rush information more critically. If you then use a frame limiter analogous to your monitor capabilities, you not only save energy and avoid the nasty coil whining, but you also avoid such emergency shutdowns caused by sluggish games or too high FPS numbers caused by too low graphics challenges in older and/or extremely simple games.

84 Antworten

Kommentar

Lade neue Kommentare

Neuling

Urgestein

Urgestein

Urgestein

1

Urgestein

Mitglied

Urgestein

Urgestein

Mitglied

Urgestein

Mitglied

Urgestein

1

Neuling

Urgestein

Veteran

Veteran

Alle Kommentare lesen unter igor´sLAB Community →